Here’s what ASCII is and how ASCII is used. Computers use ASCII, a table of characters.

The English alphabet, numbers, and other common symbols are encoded in the ASCII table as binary code.

Computers don’t store characters as characters but as a series of binary bits: 1s and 0s.

For example, 01000001 means “A” because ASCII says so.

If you want to learn all about ASCII and what ASCII is used for exactly, then you are in the right place.

Let’s get started!

Have You Ever Heard of ASCII?

American Standard Code for Information Interchange is a method of character encoding used in electronic communication.

That’s the short ASCII definition—but there’s way more to say on the topic, as this guide will show you.

Since the full name is quite a mouthful, the term is usually abbreviated simply to ASCII.

You may also see it referred to as US-ASCII.

That’s the term preferred by the Internet Assigned Numbers Authority, IANA.

The standard ASCII code serves telecommunications devices, computers, and other technology.

Most modern character-encoding schemes, such as UTF-8 and ISO-8859-1, are rooted in ASCII.

This guide explains what ASCII code is, how it’s used, why it’s important, the history of ASCII, and its purposes today.

What Is ASCII?

So just what is ASCII code?

First, ASCII stands for American Standard Code for Information Interchange.

By the way, ASCII is pronounced “as-key” (just in case you ever have to say it aloud).

Now that you’ve got the terminology down, on to your question.

ASCII is a standard coding system that assigns letters, digits, and symbols to numeric codes: 128 slots in the original 7-bit code, or 256 slots in the extended 8-bit versions. You’ll learn further below what 8-bit exactly is.

Each ASCII code is a number, and the computer stores that number in binary, the language used by computers.

ASCII corresponds to the English alphabet.

The basic ASCII table includes 128 characters, each encoded as a 7-bit integer.

It’s possible to print 95 of the encoded characters.

These include digits like 0 to 9 and alphabetic letters from a to z, both lower and upper case, plus punctuation symbols.

The ASCII code also includes 33 control codes, which can’t be printed. These codes originated with teletype machines, and the majority of them are now obsolete.

There is also an extension to the basic ASCII table that adds another 128 characters.

Therefore, the basic and extended ASCII tables combined contain a total of 256 characters.

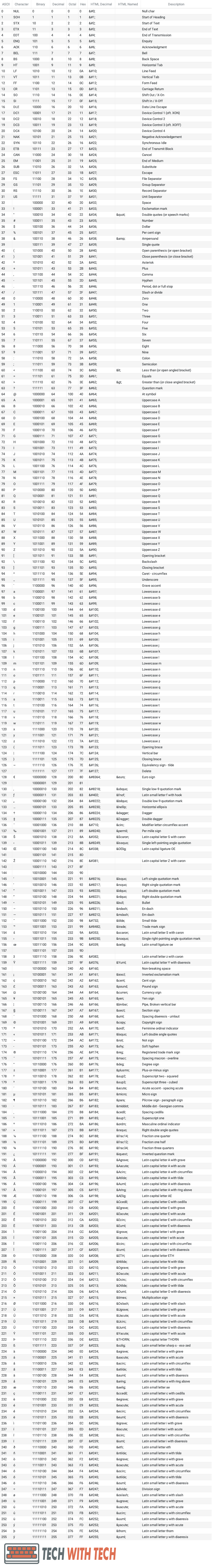

You’ll find the complete ASCII table further below.

What Is ASCII Used For?

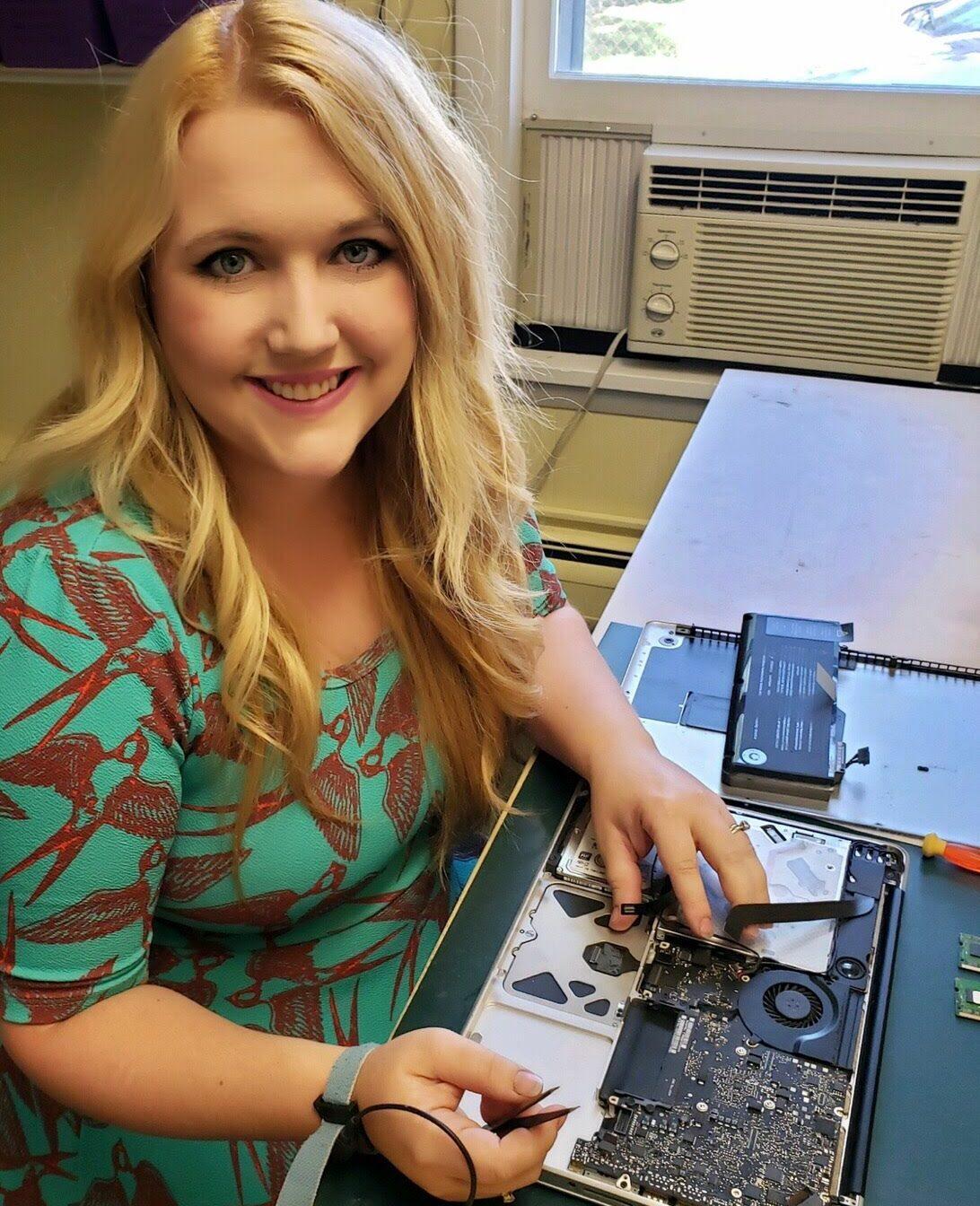

Computers don’t store characters as characters themselves. There doesn’t exist an image of each letter somewhere on the hard drive of your computer. Instead, each character is encoded as a series of binary bits: 1s and 0s.

For example, the code for the uppercase letter “A” is 01000001. But how is your computer supposed to know that 01000001 means the letter “A”? This is where ASCII comes into play: 01000001 means “A” because ASCII says so.

And the computer industry collectively agreed on what ASCII says: it developed a character encoding standard, ASCII. A character encoding standard specifies all possible characters and assigns each character a string of bits.
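The mapping between a character and its bits can be checked in a few lines of Python. This is only a minimal sketch: the helper names `char_to_bits` and `bits_to_char` are made up for illustration, and the code relies on nothing beyond Python's built-in `ord`, `chr`, `format`, and `int`.

```python
def char_to_bits(ch):
    """Return the 8-digit binary string for a single ASCII character."""
    code = ord(ch)                  # character -> number, e.g. "A" -> 65
    if code > 127:
        raise ValueError("not a 7-bit ASCII character")
    return format(code, "08b")      # number -> zero-padded binary string


def bits_to_char(bits):
    """Return the character for a string of binary digits."""
    return chr(int(bits, 2))        # binary string -> number -> character


print(char_to_bits("A"))            # 01000001
print(bits_to_char("01000001"))     # A
```

Nothing here looks up a picture of the letter. The program only converts between a number and the character that ASCII assigns to it.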

What Is 8-Bit?

As mentioned, extended ASCII assigns letters, digits, punctuation, and other characters to the 256 slots of an 8-bit code.

Now, you are probably wondering, what is 8-bit?

8-bit describes computer hardware or software that handles data in units of eight bits.

Such a device is characterized by its ability to transfer eight bits of data simultaneously.

By the way, eight bits make up 1 byte, the next standard unit above a bit.

A bit is a basic unit of information in computing. A bit has one of two possible values. For example:

- 1 or 0

- + or –

- true or false

Therefore, a bit represents 2 values: 1 or 0.

2 bits together can represent 4 values: 0 and 0, 0 and 1, 1 and 0, 1 and 1.

3 bits together can represent 8 values. 3 bits means 2 to the power of 3: 2 * 2 * 2 = 8.

And so on.

Hence, an 8-bit value can take 256 different values: 2 * 2 * 2 * 2 * 2 * 2 * 2 * 2 = 256.
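The doubling rule above can be verified by listing every bit pattern of a given width. The following is a small sketch; the function names `patterns` and `count_values` are chosen here for illustration only.

```python
from itertools import product


def patterns(bits):
    """List every combination of 0s and 1s for the given number of bits."""
    return ["".join(p) for p in product("01", repeat=bits)]


def count_values(bits):
    """Each extra bit doubles the number of values: 2 to the power of bits."""
    return 2 ** bits


print(patterns(2))                  # ['00', '01', '10', '11']
for n in (1, 2, 3, 7, 8):
    print(n, "bits ->", count_values(n), "values")
```

For 7 bits this gives 128 values and for 8 bits it gives 256, which are exactly the sizes of the basic and extended ASCII tables.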

Take the Intel 8080 processor, for instance. It was one of the first widely used computer processors. It ran on an 8-bit architecture. This means it could process 8 bits in one step.

To put that into context, today’s computer processors run on 64-bit architecture.

What Is an ASCII Table and What Does It Contain?

The ASCII code table consists of three sections:

- Non-printable: Non-printable codes consist of system codes between 0 and 31. Code 127 (delete) is also non-printable.

- Lower ASCII: Lower ASCII consists of system codes from 32 to 127. This part of the ASCII table finds its roots in the 7-bit character tables used in older American systems.

- Higher ASCII: Higher ASCII consists of codes from 128 to 255. This is the portion of the ASCII table that is programmable.

The characters in the higher portion depend on the language of the operating system or program in use.

You can view the full ASCII values and codes in tabular overviews that show the characters alongside their decimal, hexadecimal, octal, HTML, and binary representations.
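To make those columns concrete, here is a rough sketch that builds one table row per character. The function name `ascii_row` and the dictionary layout are assumptions made for this example. The HTML column uses the numeric character reference form (`&#65;`).

```python
def ascii_row(ch):
    """Build one row of an ASCII table for a single character."""
    code = ord(ch)
    return {
        "char": ch,
        "decimal": code,
        "hex": format(code, "02X"),       # two hexadecimal digits
        "octal": format(code, "03o"),     # three octal digits
        "html": "&#{};".format(code),     # numeric character reference
        "binary": format(code, "08b"),    # eight binary digits
    }


for ch in "Aa 2":
    print(ascii_row(ch))
```

For the letter “A” the row holds decimal 65, hexadecimal 41, octal 101, HTML `&#65;`, and binary 01000001.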

There is also the extended ASCII table.

It relies on eight bits instead of seven.

This adds another 128 characters, giving ASCII the ability to contain extra characters, including foreign language letters, drawn characters, and special symbols.

So, how many ASCII characters are there? It depends.

If you are looking at the basic ASCII code table, there are 128 characters (7-bit).

If you’re looking at the extended ASCII table, you have to consider the added 128 characters, bringing the total to 256 (8-bit).

Here is the complete ASCII table with 256 entries—check out how the binaries of the entries before entry #128 have seven or fewer places and the binaries of the entries after entry #128 have eight places:

Complete ASCII Master Table

At the end of this article is a downloadable PDF version of this complete ASCII master table.

What Is the Difference Between ASCII 7-Bit and ASCII 8-Bit?

So, before going on, it’s important to distinguish between two types of ASCII:

7-bit ASCII versus 8-bit ASCII.

These are two different versions of ASCII.

You might hear reference to either one, so it’s essential to understand what each term means.

The 7-bit version is the original ASCII and contains 128 characters.

It encompasses both printable and non-printable characters, with decimal codes from 0 to 127.

There is also 8-bit ASCII, which consists of 256 characters, with decimal codes from 0 to 255.

8-bit ASCII is also called extended ASCII. Rather than a single standard, it is a family of 8-bit character sets that all share the original 128 ASCII codes.
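The difference shows up as soon as a character outside the original 128 appears. The sketch below uses Python's built-in `ascii` and `latin-1` codecs. Picking Latin-1 (ISO-8859-1) as the 8-bit example is an assumption made for illustration, since several different 8-bit extensions exist.

```python
plain = "cafe"
print(list(plain.encode("ascii")))      # [99, 97, 102, 101]

accented = "café"
try:
    accented.encode("ascii")            # 7-bit ASCII has no code for "é"
except UnicodeEncodeError:
    print("'é' does not fit into 7-bit ASCII")

# An 8-bit extension such as Latin-1 uses the codes above 127.
data = accented.encode("latin-1")
print(list(data))                       # [99, 97, 102, 233]
```

The first three codes are identical in both encodings. Only the accented letter needs a code above 127.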

How Many ASCII Characters Are Printable?

Above, you saw a reference to printable and non-printable ASCII.

Which ASCII characters are printable?

“Printable” simply means that the character produces a visible symbol (or a blank space) when it is printed or displayed.

Most widely used ASCII characters are printable. Not all ASCII characters are printable, however.

So, how many ASCII characters can you print?

You can print 95 characters of 7-bit ASCII. 33 ASCII characters are non-printable.

A “non-printable” character doesn’t represent a written symbol. Instead, it signals an action to a device, such as starting a new line.

These are also referred to as control characters.

Examples of non-printable ASCII include a line feed, form feed, carriage return, escape, delete, and backspace—you can check them out in the ASCII table above.
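The 95/33 split can be recomputed from the code ranges. This is a minimal sketch that assumes the usual convention: codes 32 to 126 are printable (space included), while codes 0 to 31 and 127 are control characters. The `names` dictionary is just a hand-picked subset for illustration.

```python
# Printable characters: codes 32 (space) through 126 (~).
printable = [chr(code) for code in range(128) if 32 <= code <= 126]

# Control characters: codes 0 through 31, plus 127 (delete).
control = [code for code in range(128) if code < 32 or code == 127]

print(len(printable), len(control))     # 95 33

# A few of the control codes mentioned above, by decimal value.
names = {
    8: "backspace",
    10: "line feed",
    12: "form feed",
    13: "carriage return",
    27: "escape",
    127: "delete",
}
for code, name in names.items():
    print(code, name, code in control)
```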

Who Assigns ASCII Values?

ASCII has many values corresponding to different characters. It even has a value for space.

The ASCII value of space in decimal is 32 and in binary is 00100000. But just who assigns these values, and how are ASCII values assigned?

ASCII values are assigned by the INCITS (International Committee for Information Technology Standards).

This is a standards development organization, accredited by the ANSI (American National Standards Institute).

The INCITS is composed of information technology developers.

INCITS was previously known as X3 and then NCITS.

You can learn more about this group, which is also behind ASCII’s standardization, in the section about the history of ASCII’s standardization below.

Why Is ASCII So Important?

Now you know the basics about ASCII, including what it is and who defines ASCII values.

You might still be confused as to why ASCII matters, however.

This section explains why it’s so important.

ASCII is essential in the modern world because it serves as the link between what a person sees on the computer screen and what is stored on the computer’s hard drive.

Without ASCII, modern computing would be way more complex and far less streamlined.

ASCII provides a common language across all computers to establish this link between the screen and the hard drive.

ASCII’s Importance in Greater Detail: A Common Language for Computers

Are you still confused?

ASCII translates human text to computer text and vice versa.

Computers communicate via binary, a complex language consisting of a series of zeroes and ones (0, 1).

That’s the alphabet for a laptop.

Meanwhile, humans have their alphabet.

The English alphabet consists of the ABC’s, for example.

Other languages use other alphabets.

Russian uses the Cyrillic alphabet, for example.

In the past, computers had their versions of these different alphabets and languages.

Consider it like this:

While some computers used the computer equivalent of a Cyrillic alphabet, others used a computer-equivalent Latin alphabet (the one used for English).

This made it extremely difficult to establish communication and coherence between computers.

ASCII gave all computers one language, with one alphabet.

In this respect, ASCII was a revolutionary development, allowing computers to share files and documents, and paving the way for modern computer programming.

What Are Examples of ASCII in Action in Everyday Life?

ASCII files can serve as a common denominator for all kinds of data conversions.

For example, say a software program can’t convert its data to another software program format.

However, both programs can input and output ASCII files.

This means a conversion may be possible, even though the two programs themselves aren’t compatible.

ASCII characters are also used when you send or receive an email.

The next section explores why ASCII code is used.

Why Is the ASCII Code Used?

You’ve got your answer to the question, “what is ASCII code?”

You’re probably still wondering what it’s for, however.

ASCII tables are widely used in computer circles.

They essentially serve as the “Google Translate” between humans and computer hard drives.

The hard drive is essentially the long-term memory of the computer. It stores information using magnets or transistors, which can be switched on or off.

Hard drives rely on bytes.

A byte is a unit of digital information. It usually consists of eight bits. An 8-bit byte is commonly referred to as an octet.

An ASCII table is used to translate a byte of data (a binary code consisting of eight 0s and 1s) to a letter a human can read, like “a” or “A” or the number “2”.

Practically speaking, what is the purpose of ASCII?

ASCII tables are used across all different computer systems.

You can thus read a Word document written on your PC, even if you’re using a Mac. Because of the ASCII code, each system knows which binary stands for which character.

That’s just one of many examples of how ASCII makes modern life more manageable.

Where Is ASCII Stored?

Now, it makes sense that in order to use ASCII, computer hardware will have that handy ASCII table stored somewhere, right?

Not quite.

Computers can’t deal with characters as such.

All computers care about is their language, binary—computers only store numbers.

The association of an 8-bit number with a printable character that a human can understand is totally arbitrary to the laptop.

Want to understand how ASCII works and where it’s stored?

Then it’s critical to understand the role of a compiler.

The Compiler

In computer programming, a compiler is a type of program responsible for translating computer code that has been written in one programming language, referred to as the source language, into a different kind of language, known as the target language.

There are different kinds of compilers.

For example, a cross-compiler runs on one type of computer or operating system but produces code for a different one.

In contrast, a source-to-source compiler is able to translate between high-level programming languages.

The Relevance of Compilers to ASCII

Computer programs must perform text string manipulation regularly.

A text string is made up of characters, which are translated into machine code.

The compiler must first store each character in a location in the main memory, somewhere the CPU (Central Processing Unit) can access, to complete this translation.

Then, the compiler must generate machine instructions to write the actual characters on the screen.

Programming with characters is possible because the compiler that accepts the source code and the hardware that runs the program agree on how to map characters to numbers.

Encoding is used to determine that, for example, “A” equals 65.

It’s the compiler that stores characters.

Long story short, the ASCII table is managed by an operating system such as Windows, macOS, or Linux.

It doesn’t have a specific physical location such as a PDF file or your favorite picture from last summer.

How ASCII characters are placed in the main memory—that’s probably your next query, right?

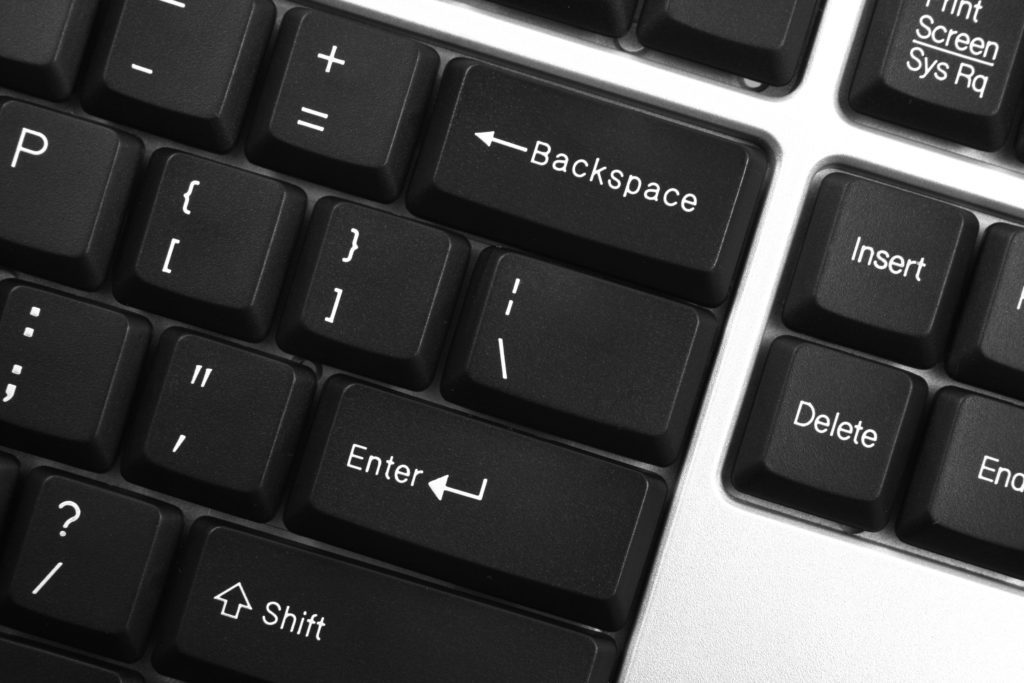

The program reads ASCII characters when they are entered via the keyboard. It then stores them in successive byte locations. Done.
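The idea of successive byte locations can be imitated in Python. This is only a sketch: the `bytearray` stands in for a stretch of main memory, and the offsets are positions within that array rather than real hardware addresses.

```python
word = "Hi!"

# Store each character's ASCII code in consecutive byte positions.
memory = bytearray(word, "ascii")

for offset, byte in enumerate(memory):
    # offset: position in "memory"; byte: the stored number
    print(offset, byte, format(byte, "08b"), chr(byte))
```

The loop prints one line per byte, showing that what is stored is the number (72, 105, 33) and that the character only reappears when the number is translated back.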

What Are Examples of ASCII?

It’s often easier to explain ASCII with examples.

Seeing how ASCII symbols correspond to values that humans will understand is helpful in explaining the concept.

What is an ASCII example?

Here are some ASCII examples:

- A lowercase letter “i” is represented in the ASCII code by the binary 01101001 and the decimal 105.

- The lowercase “h” character is equal to 01101000 in binary and has a decimal value of 104.

- The lowercase “a” is represented by a 97 in decimal and 01100001 in binary.

- An uppercase “A” is represented in decimal by 65 and by 01000001 in binary.

What Is the History of ASCII? (5 Phases)

Understanding the historical significance of the ASCII code helps to understand its relevance today.

First, it’s important to recognize that ASCII finds its roots in the telegraph code.

In comparison to earlier telegraph codes, ASCII was developed to be more convenient for alphabetization purposes and sorting lists.

It also accommodated devices other than teleprinters.
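The sorting-friendly layout is still visible today. In the sketch below, Python's default string comparison orders text by code value, so digits come before uppercase letters, which come before lowercase letters. The word list is made up for the example.

```python
words = ["pear", "Apple", "apple", "Zebra", "42"]

# Default sorting compares character codes: digits < uppercase < lowercase.
print(sorted(words))                # ['42', 'Apple', 'Zebra', 'apple', 'pear']

# Letters are in alphabetical order, so comparing codes compares letters.
print(ord("A"), ord("B"), ord("C")) # 65 66 67

# Upper and lower case differ by exactly 32, which is a single bit.
print(ord("a") - ord("A"))          # 32
print(chr(ord("a") ^ 0b100000))     # A
```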

#1 ASCII and the Telegraph

Before there were phones and the internet, the telegraph enabled long-distance real-time communication.

It transmitted printed codes via radio waves or wire.

ASCII code was first used commercially by Bell as part of a seven-bit teleprinter code for telegraph services.

Does the name “Bell” ring a bell?

You might associate it with Alexander Graham Bell, who is credited with inventing the first practical telephone.

He was also a founder of the Bell Telephone Company, established in 1877.

Bell subsidiary American Telephone and Telegraph Company (a name you might still recognize as AT&T) was a primary provider of telegraph services.

#2 Standardization of ASCII

So when was ASCII invented?

In the early days of computer technology, there were dozens of different ways to represent numbers and letters in computer memory.

This was, in part, because the size of a single piece of computer memory wasn’t standardized until the mid-1900s.

So, until the 1960s, different codes were used for computing.

IBM used one code (Extended Binary Coded Decimal Interchange Code, or EBCDIC), while AT&T used another code, and the U.S. military used yet another.

It wasn’t very convenient, to say the least.

In the 1960s, engineers from IBM led efforts to establish a single code for communications, and work began on standardizing ASCII code for everyday use.

This resulted in the establishment of the American Standards Association’s (ASA) X3.2 subcommittee.

On October 6, 1960, the ASA subcommittee X3.2 met to standardize ASCII. If you’ve been wondering “who invented ASCII code?” there’s your answer.

The first edition of the ASCII code was published in 1963.

In this first edition, 28 code positions were left without an assigned meaning, reserved for future assignments.

ASCII was first used commercially by AT&T in 1963. It served as a seven-bit teleprinter code for AT&T’s TeletypeWriter eXchange, TWX, network.

#3 Commercial Use of ASCII

The ASCII code was revised in 1967.

At this point, the X3.2 committee made a number of changes.

For example, they added new characters, including the vertical bar and braces, and removed others.

The most recent update was made in 1986.

In 1968, U.S. President Lyndon B. Johnson decreed that any computer purchased by the federal government had to support ASCII.

This was a significant milestone, indicating a top-down transition towards ASCII code.

Later, in the 1970s, ASCII came into widespread use when Intel developed eight-bit microprocessors.

Finally, the primary competitor for ASCII, EBCDIC, was essentially retired in the 1980s.

By this point, the personal computer had been developed, allowing ASCII to gain greater relevance to the broader public.

#4 Development of Extended ASCII

As it is, the ASCII character set can barely cover all of the needs of the English language.

It lacks many glyphs and is way too small for universal use.

So, when was extended ASCII developed?

In the 1970s, computer developers had already noticed this problem.

As they tried to develop technology for global markets, they found ASCII couldn’t cover everything.

For example, some European languages use letters with diacritics (like ä, ï, ö, ë, ÿ).

There are also other symbols and codes used globally, like “£” for the British pound (which then wound up getting confused with the American take on the pound, #).

In the 1970s, computers were standardized on eight-bit bytes.

By this time, it became evident that computer technology and software could handle text using 256-character sets.

The result was extended ASCII.

This allowed for the addition of 128 characters beyond the original ASCII set.

The developed eight-bit encodings covered primarily Western European languages, like German, French, Spanish, and Dutch.

Since then, many proprietary ASCII 8-bit character sets have developed.

Transcoding refers to the process of translating between these unique, specially developed sets and standard ASCII.

Despite attempts to coordinate internationally, proprietary sets remain the go-to solution in contexts where traditional extended ASCII proves insufficient.
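Transcoding can be sketched with two 8-bit sets that ship as Python codecs. The choice of IBM code page 437 and Latin-1 is an assumption made for this example. Both place “é” above code 127, but at different positions.

```python
# The same letter has different byte values in different 8-bit sets.
raw_cp437 = bytes([0x82])               # "é" in IBM code page 437

# Step 1: decode the bytes using the set they were written in.
text = raw_cp437.decode("cp437")

# Step 2: encode the text again using the target set.
raw_latin1 = text.encode("latin-1")

print(text, hex(raw_cp437[0]), hex(raw_latin1[0]))

# Codes below 128 are plain ASCII and survive unchanged.
print("A".encode("cp437") == "A".encode("latin-1"))
```

Only the upper 128 codes need translating. The shared ASCII range is what makes these sets interoperable at all.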

#5 ASCII in More Recent History

Note that ASA is now referred to as the American National Standards Institute or ANSI.

Similarly, the X3.2 subcommittee is now referred to as the X3.2.4 working group, or the International Committee for Information Technology Standards, or INCITS.

ASCII was the most common type of character encoding used on the world wide web until 2007, at which point UTF-8 took over.

UTF-8 is backward compatible with ASCII: the first 128 characters are encoded exactly as they are in ASCII.
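Backward compatibility here means that text containing only ASCII characters produces identical bytes under both encodings. A quick check, using Python's built-in `ascii` and `utf-8` codecs (the variable names are chosen for illustration):

```python
text = "ASCII"

ascii_bytes = text.encode("ascii")
utf8_bytes = text.encode("utf-8")

# Pure ASCII text is byte-for-byte the same in UTF-8.
print(ascii_bytes == utf8_bytes)        # True

# Characters outside ASCII take more than one byte in UTF-8.
accented_bytes = "é".encode("utf-8")
print(list(accented_bytes))             # [195, 169]
```

This is why an old ASCII file can be opened as UTF-8 without any conversion.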

How Is ASCII Used in Today’s World?

The information above covers a lot of material, from the ASCII meaning to the tables and the history of ASCII’s development.

So, who uses ASCII today?

Is it still relevant?

Absolutely.

As mentioned, however, ASCII has limits.

It’s difficult to extend to non-English alphabets, like Cyrillic.

This is where Unicode comes in: it is an attempt to create a character table that can encompass everything, from African to Asian scripts.

Non-ASCII characters are covered.

What Is ASCII Used for Today?

To this day, ASCII serves as a standard computer language.

The basic principle remains the same:

ASCII uses codes from the ASCII table to translate computer information into information that people can read.

This character set helps computers and humans “communicate” since computers only understand binary, and humans only understand language.

Today, ASCII is the standard for almost any operating system.

When Is ASCII Used Today?

As to where ASCII code is used, the answer is pretty much everywhere.

Modern extended versions of ASCII keep it relevant, and it can even incorporate languages other than English as well as scientific, logical, and mathematical descriptions.

When a computer pulls up data, it checks the character’s number, for example, decimal 65.

It then translates that number to the corresponding character. In ASCII, decimal 65 means a capital letter “A”.

For example, here’s your ABC’s as a computer sees it: “01000001 01000010 01000011”. That might look like gibberish to you, but to a computer, it’s obvious. It reads as “ABC” when seen on the screen.
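Going from text to a bit string and back can be sketched as follows. The function names `encode_bits` and `decode_bits` are invented for this example, and each character is written as eight binary digits separated by spaces.

```python
def encode_bits(text):
    """Write each ASCII character as eight binary digits."""
    return " ".join(format(byte, "08b") for byte in text.encode("ascii"))


def decode_bits(bit_string):
    """Turn space-separated groups of binary digits back into text."""
    codes = bytes(int(chunk, 2) for chunk in bit_string.split())
    return codes.decode("ascii")


print(encode_bits("ABC"))                               # 01000001 01000010 01000011
print(decode_bits("01000001 01000010 01000011"))        # ABC
```

Note that the uppercase letters start at 65. The codes 49, 50, and 51 (binary 0110001, 0110010, 0110011) belong to the digits “1”, “2”, and “3”.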

Who Uses ASCII?

While you undoubtedly see the value of ASCII by now, you might still be wondering who on earth uses this stuff?

The answer is computer programmers.

Programmers trap keystrokes by character code and then decide what to do when individual keystrokes are pressed.

ASCII is also used in business information systems, such as ERP or CRM systems.

ASCII remains the base standard for such tasks.
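Handling keystrokes by character code might look roughly like the sketch below. The function `describe_key` and the actions it returns are hypothetical, and a real program would receive the codes from its operating system or user-interface toolkit rather than from a hard-coded list.

```python
def describe_key(code):
    """Decide what to do with a key, based only on its ASCII code."""
    if code == 27:
        return "escape: cancel"
    if code in (10, 13):                # line feed or carriage return
        return "enter: submit"
    if code in (8, 127):                # backspace or delete
        return "erase a character"
    if 32 <= code <= 126:               # printable range
        return "insert " + repr(chr(code))
    return "ignored"


# Hypothetical sequence of incoming key codes.
for code in (72, 105, 8, 13, 27):
    print(code, "->", describe_key(code))
```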

What Are the Non-Traditional Uses of ASCII? (2 Uses)

ASCII is still ultimately about one thing—improving computers’ functionality and enhancing compatibility between different systems.

However, ASCII can also be used for some more, shall we say, frivolous purposes.

Read on to discover two fun uses of ASCII.

#1 ASCII Emojis

The English language has evolved a lot since the development of ASCII in the 1960s.

The way people communicate has changed, with more texting.

One of the most significant results has been the use of emojis.

Just what does ASCII do with emojis?

Can ASCII represent emojis?

Not in the way you might be envisioning.

The traditional ASCII table hasn’t been expanded to include an entire section for emojis, for example.

However, there are so-called ASCII emoticons that you can check out.

There is even an ASCIImoji extension for Chrome.

It lets you type in a word and get an emoji.

For example, if you type (bear), you see this on the screen: ʕ·͡ᴥ·ʔ

You can check out a full list here but beware, these aren’t all suitable for work (NSFW)!

So, can ASCII represent emojis?

Kind of.

This isn’t the traditional take on ASCII, however.

It follows a similar principle to how ASCII code works.

In this case, however, the characters that ASCII normally uses to represent text are instead used to depict images.

#2 ASCII Art

Another modern use of ASCII is ASCII art.

This simply consists of images created on computers through the strategic positioning of character strings.

The strings of characters are positioned in a way that they look like figures and drawings from afar.

Technically, ASCII art is a type of graphic design.

It uses computers to present pictures that are pieced together using ASCII characters.

Only the 95 printable ASCII characters are used.
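One common way to produce such pictures is to map brightness values to characters of increasing visual density. The sketch below illustrates that general idea and is not a reconstruction of any particular tool. The character ramp `RAMP`, the function name `to_ascii_art`, and the tiny sample image are all assumptions made for the example.

```python
# Printable ASCII characters ordered from light to dark (an arbitrary choice).
RAMP = " .:-=+*#%@"


def to_ascii_art(pixels):
    """Convert rows of darkness values (0 = empty, 255 = darkest) to text."""
    lines = []
    for row in pixels:
        # Scale each value from 0..255 to an index into RAMP.
        line = "".join(RAMP[value * (len(RAMP) - 1) // 255] for value in row)
        lines.append(line)
    return "\n".join(lines)


image = [
    [0, 128, 255, 128, 0],
    [128, 255, 255, 255, 128],
    [0, 128, 255, 128, 0],
]
print(to_ascii_art(image))
```

With this ramp, a value of 0 becomes a space, 128 becomes “=”, and 255 becomes “@”, so the sample prints a small diamond-like blob.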

Similar to ASCII in general, ASCII art can be traced back to much older technology.

While ASCII finds its roots in the telegraph, ASCII art finds its inspiration in typewriter art, which uses a typewriter to draw a picture using precisely placed letters.

The oldest known examples of ASCII art were created by Kenneth Knowlton, a computer-art pioneer who worked at Bell Labs in the 1960s.

Since then, ASCII art has found a substantial following.

ASCII art isn’t just about aesthetics, however.

Like ASCII, it developed because of a practical need.

ASCII art was invented in part because early printers didn’t have the graphics capabilities that printers have today.

Characters were thus sometimes used in place of graphic marks.

Additionally, bulk printers would often use ASCII art to mark the division between different print jobs.

Inserting a “graphic” made it easier for the computer operator to spot. ASCII art was also used in emails before images could be embedded.

Examples of ASCII Art

Some ASCII code art has become very popular.

You can take a look for yourself at famous paintings recreated as ASCII art online.

From Sandro Botticelli’s “The Birth of Venus” to Salvador Dali’s “The Persistence of Memory,” you will likely recognize quite a few of these creations—even when they’re “painted” using ASCII.

More ASCII Tables

If you’re looking for any other ASCII table than the complete ASCII master table above or as a PDF version below, then you’ll find it here.

All tables come as a PDF version as well:

- Complete ASCII to binary table

- Complete ASCII to decimal table

- Complete ASCII to octal table

- Complete ASCII to hexadecimal table

- Complete ASCII to HTML table