Here’s THE definitive guide to the history of information technology.

From petroglyphs (drawings on rocks) to steam-driven calculation machines to the first microprocessor.

So if you want a deep dive into the history of information technology, then this is the article for you.

Let’s jump right in!

THE Definitive Guide to the History of Information Technology

We live in amazing times. We have the ability to communicate with anyone, anywhere in real time and even face-to-face.

Scientists have the ability to complete computations faster than our grandparents ever thought possible.

We store the equivalent of whole libraries of information and data in equipment smaller than our thumbnail.

But, how?

How did we get here? Computers, smartphones, and GPS didn’t just pop out of thin air. Or, did they?

No.

All of this extraordinary information technology we have today originated, believe it or not, from the first cave paintings and petroglyphs (drawings on rocks) found all over the world.

These drawings and their later innovations represent the desire of all humans to share our thoughts, record them, and even create representations of past events so we can pass on our understanding and knowledge to the next generation.

We all want to create a symbolic world that can travel through both distance and time. This is the essence of communication.

This is the foundation of Information Technology.

It may well be the mission of our generation to help our descendants to understand that technological progress does not necessarily imply unthinking rejection of what the past has brought us.

Henri-Jean Martin, The History and Power of Writing

In fact, to understand the past is to embrace the present with a greater understanding and appreciation not only of what we have, but also of those amazing problem solvers who laid the groundwork so we can exist at this amazing moment.

Are you ready to travel back through time to discover how they did it?

Let’s go!

Fundamental Problems to Solve

Cave Paintings and Petroglyphs

Problem to be Solved: Beginning of consciousness and symbolic thinking.

Humans came out of Africa about 70,000 years ago with the ability to communicate orally. But, as we all know, talk only goes so far.

What if we want to leave a message for someone or plan a mastodon hunt or share a story about a god or gods? How would you do it?

Drawings.

The oldest drawings found thus far date back to about 40,000 years ago.

The most famous are found in southern France and Spain, but they have also been found in Indonesia, the Amazon Jungle, Africa, and in places around the United States, for just a few examples.

Drawings are found on rock surfaces either in caves such as Lascaux in France or in rock outcroppings such as those seen at the Petroglyph National Monument near Albuquerque, New Mexico.

These are all types of parietal art: human-made impressions or markings in or on natural surfaces.

All were created by either painting directly on the surface of the rock or incising (carving into) the rock.

There is no universal style, only a few common themes. Most depict animals or human interaction with animals, stencils of human hands, and abstract patterns and geometric symbols.

Current scientific thinking: Scientists don’t actually know why cave paintings and petroglyphs exist.

What we do know are four very important things:

- The drawings demonstrate that humans had become aware of themselves as they related to their world and to others. Drawings demonstrate the desire to represent the human place in the world whether that be our relationship with other creatures, our place on the planet or within the cosmos. It was the beginning of consciousness.

- Ancient peoples saw writing as something that came to them from the gods. It was sacred and magical. Some of these drawings may have been a form of what scientists call “sympathetic magic,” created by shamans or other religious leaders to depict religious ideas, to honor the gods, or to request their help.

- Many of the drawings around the world are found in places with large rocks which created acoustic opportunities—amplified sound and echoes. Could these people have been experimenting with sounds and using the drawings to show the connection between the subject depicted and the sound that represents it?

- Drawings represent humans’ first attempts at symbolic thinking. This is important because, remember, the characters we humans use for writing (including computer coding) are actually abstract symbols representing sounds, parts of a word, or concepts (or instructions for computers).

Origins of Early Writing: Creation of Writing Through the Concept of Numbers

When all is said and done, the primary reason writing was created at all was the establishment of settled agriculture, which led to community living, government administration, and trade among different peoples.

Somehow they had to keep track of it all. And humans started this process early.

Archeologists have found notched bones, that is, tallies or inventory records, in Africa dating as far back as 35,000 years ago!

Problem to be Solved: How to keep track of our agricultural harvest? How do we talk with or ask questions of the Gods? How do we declare ownership of something? How do we know if taxes have been paid to the king?

As far as archeologists have been able to determine, humans took their second big step on the road to modern information technology about 5,500 years ago.

Humans developed not only systems to declare ownership of a storehouse (cylinder seals rolled across clay to leave a symbol, which sealed doorways like a lock) but also number systems to record inventories, taxes, payments, and trade transactions. This was the beginning of mathematics.

In addition, many cultures wanted to ask their gods questions (Chinese Shang Dynasty oracle bones used in divination).

Others, such as the Mayans, tracked celestial activity to understand the seasons and increase their harvests, and they recorded historical events as well.

In other words, humans wanted to record information; to create permanent documented memory.

But, how did they do this?

Well, at first, they created symbols.

For example, the Sumerians carved a “drawing” of an animal into wet clay, then placed tally marks beside it to represent how many of that animal or other product they had.

They were using various “pictographs” (picture writing) to convey meaning. Many cultures such as Mayan, Chinese, and Egyptian used a variation of pictograms early on.

As their documentation needs became more complicated and they wanted to track or describe more types of information, people developed numbering systems.

The Sumerians created a number system based upon the number sixty—from our perspective an unusual choice.

Yet, if you stop and think about it, sixty can be divided evenly in many ways with no remainders.

In fact, it was such an elegant solution that we still use their numbering system today to tell time—sixty seconds to a minute, sixty minutes to an hour.

We also use this system to measure angles: a full circle has 360°, six times sixty.

And if you have noticed that many measurements are built on twelve, one of sixty’s divisors, like twelve inches to a foot, you can blame it on those mathematically inclined Sumerians.
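To see why sixty is so convenient, here is a short Python sketch (the function names are our own, for illustration only). It lists the whole-number divisors of 60 and of 10, then splits a count of seconds into hours, minutes, and seconds, which is base-60 place value at work in our clocks.

```python
def divisors(n):
    """Return every whole number that divides n with no remainder."""
    return [d for d in range(1, n + 1) if n % d == 0]

def to_hms(total_seconds):
    """Split a count of seconds into (hours, minutes, seconds), i.e. base-60 places."""
    minutes, seconds = divmod(total_seconds, 60)
    hours, minutes = divmod(minutes, 60)
    return hours, minutes, seconds

print(divisors(60))   # [1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30, 60]
print(divisors(10))   # [1, 2, 5, 10]
print(to_hms(5000))   # (1, 23, 20)
```

Sixty has twelve divisors, ten has only four, which is why fractions like a third or a quarter of an hour come out as whole minutes.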

Yet, most early numbering systems in the rest of the world were based upon the decimal system (that is, 10s; the Mayans used 20s).

Most were formulated using symbols to represent key numbers, similar to what we call Roman numerals (I=1, V=5, X=10, C=100, D=500, M=1000). So 1999 would be written as MCMXCIX.
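Here is a minimal Python sketch of how Roman numerals encode a number, assuming the modern subtractive convention (CM for 900, XC for 90, IX for 9); the Romans themselves were not always consistent about it. The names `to_roman` and `from_roman` are illustrative.

```python
# Symbol values from largest to smallest, including the subtractive pairs.
ROMAN = [
    (1000, "M"), (900, "CM"), (500, "D"), (400, "CD"),
    (100, "C"), (90, "XC"), (50, "L"), (40, "XL"),
    (10, "X"), (9, "IX"), (5, "V"), (4, "IV"), (1, "I"),
]

def to_roman(n):
    """Write n (1-3999) by repeatedly taking the largest symbol that fits."""
    parts = []
    for value, symbol in ROMAN:
        count, n = divmod(n, value)
        parts.append(symbol * count)
    return "".join(parts)

def from_roman(text):
    """Read a numeral: a smaller symbol before a larger one is subtracted."""
    values = {"I": 1, "V": 5, "X": 10, "L": 50, "C": 100, "D": 500, "M": 1000}
    total = 0
    for i, ch in enumerate(text):
        v = values[ch]
        if i + 1 < len(text) and values[text[i + 1]] > v:
            total -= v  # e.g. the I in IX
        else:
            total += v
    return total

print(to_roman(1999))          # MCMXCIX
print(from_roman("MCMXCIX"))   # 1999
```

Notice that there is no symbol for zero and no fixed "places": the reader has to compare neighboring symbols to know whether to add or subtract.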

Some of these systems relied on the order of symbols to help with computation, but I suspect you can see how crazy it could get, sort of like some coding algorithms.

What made it even more challenging was that most of them lacked a zero, which led to all kinds of confusion. Was it 53 or 530?
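A short Python sketch makes the problem concrete. It assumes a positional system, where each digit’s value depends on its place; the helper name `place_value` is ours. Without a written zero, a scribe who saw only “5 3” could not tell 53 from 530, and in a base-60 positional system “1 30” could mean 90 or 5,400.

```python
def place_value(digits, base=10):
    """Combine digits (most significant first) using positional notation."""
    value = 0
    for d in digits:
        value = value * base + d  # shift everything one place left, add the new digit
    return value

print(place_value([5, 3]))               # 53
print(place_value([5, 3, 0]))            # 530: the zero holds the ones place
print(place_value([1, 30], base=60))     # 90
print(place_value([1, 30, 0], base=60))  # 5400
```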

Writing didn’t always mean mathematics. These people also wanted to explain more complicated ideas and to identify many things, not just a few commercial products.

But, simple pictures would not accomplish all the communication goals they had.

So, they also created symbols to represent sounds rather than actual items.

Some societies chose to break words down into syllabic sounds. This proved very helpful when trying to describe a proper noun like the name of a king.

And some writing systems used symbols to represent concepts.

A language like early Chinese used some pictograms as well as some ideographs (symbols for ideas), but most of the language, roughly eighty percent, used a dual system of two symbols with one representing meaning and the other sound.

So how did they do the writing?

One of the best known and oldest writing systems is cuneiform developed by the Sumerians, who lived in what is now southern Iraq.

They had lots of clay available to them and that became their primary writing medium.

They used a reed tool, often called a stylus, to incise (cut into) the wet clay with wedge-shaped marks. Groups of these marks formed signs, each representing a word, idea, or sound.

Scientists call this type of writing cuneiform (wedge-shaped) writing.

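The earliest Sumerian tablets were written in vertical columns read from right to left; later scribes turned the signs on their sides and wrote in horizontal lines from left to right, as Europeans do. And, as we learned above, the symbols could be quite detailed.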

However, as time went on, these representations became more simplified to help speed up writing.

What is interesting to note is that this writing system had no punctuation. Basically, it was just long strings of characters.

To differentiate something important, that idea would be placed in a box or a cartouche to create a unit of meaning.

One must remember that finer points of modern writing such as paragraphs, punctuation, headers, titles, and so forth had yet to be invented.

Most early writing systems world-wide followed a similar pattern.

Harnessing Science: Tools to Technology

Problem to be Solved: With so much information to manage, societies had to develop methods to speed up writing, improve computational accuracy, and create systems to store records.

Tools—So Many Ideas

While the Sumerians used clay, most other societies used materials that were often a type of wood or grass such as birch bark, bamboo, papyrus, palm or agave leaves, and wood blocks as a writing medium.

Later on, people would use fabric (silk), leather, specially prepared animal skin called parchment, or even metal. Sometimes these materials would be covered in wax and the writing would be done in the wax.

Other times an ink of some kind was used. These inks could come from pigments derived from minerals or plant materials or a charcoal-like pigment created from burning oil and wood.

And like with cuneiform, some writing would be cut into the material used.

Paper, as we know it, would be developed in the first century CE (the common era) by the Chinese and would spread like wildfire around the world with the help of Arab traders starting in the eighth century. But that is way into the future.

What is important to remember is the “shape of written signs depended on the materials on which they were written.” (Martin, 43) and the material used depended upon what was available to that society.

Writing systems became so standardized in Sumer and so necessary for conducting daily activity that lexical lists (standardized lists of words and signs, some dating to around 3000 BCE, “before the common era”) were developed, as well as schools to teach people how to read and write.

People needed this understanding to be able to read contracts and wills as well as conduct business such as purchase houses and land and have a record of cancelled loans.

People started to record poems and stories, with one of the most famous being the Epic of Gilgamesh (about 1300 BCE).

They wrote down laws. Many of you have probably heard of Hammurabi’s Code (1754 BCE).

Legal officials documented court proceedings. Kings gathered these materials together to create great libraries (for example, the library of Ashurbanipal in the seventh century BCE).

Common people stored deeds and other important records in their homes. Kings and other government officials sent written messages to their fellow kings as a form of diplomacy.

Basically, Sumerian society functioned administratively much as we do today and they could do so through writing.

Yet, writing with so many characters could be cumbersome. So, they found ways to simplify.

Probably one of the most famous of these processes is how our alphabet came into being.

While it grew out of older writing systems such as cuneiform, which had about one thousand characters, and Egyptian hieroglyphs, a slow process began to narrow down the number of signs to represent just consonant sounds.

This occurred around 1500 BCE among speakers of Semitic languages, the family that includes Hebrew and Arabic, who were in contact with Egyptian writing.

Scholars call this script Proto-Canaanite.

The Phoenician peoples, who were great traders throughout the Mediterranean, took this process even further at about 1100 BCE when they created an alphabet that had only twenty-two letters, no vowels.

When reading this script, one just filled the vowel sound in.

This change helped scribes write faster.
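As a rough analogy only (English is not a Semitic language, and this is not how Phoenician was actually written), this Python sketch drops the vowels from a phrase so you can try filling them back in the way a reader of a consonant-only script would. The function name is illustrative.

```python
VOWELS = set("aeiouAEIOU")

def consonants_only(text):
    """Drop vowel letters, roughly imitating a consonant-only script."""
    return "".join(ch for ch in text if ch not in VOWELS)

print(consonants_only("information technology"))  # nfrmtn tchnlgy
```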

Tools—So Many Numbers

The development of numerals led to activities as varied as taxation and the governmental bureaucracy to manage it; architectural design and building plans, as well as methods of keeping track of property ownership (early surveying); the establishment of a common system of weights and measures; and even banking, with merchants lending people money, which required accounting systems to keep track of who still owed them money.

Banks, as we know them, hadn’t been invented yet.

Societies also found ways to speed up calculations and to improve accuracy with the invention of early calculators.

The Sumerians may have developed an early counting tool of this kind: rods in a row with beads that could move along them. Today we call it an abacus. Many of you have probably used one in school.

The Chinese developed a variation of this method with a tool called a Suanpan.

Both increased the accuracy in calculations involving addition, subtraction, multiplication, and division.
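The bead arrangement is easier to picture with a small sketch. It assumes a simplified modern abacus, with beads worth five above a bar and beads worth one below it on each rod, rather than any specific Sumerian or Chinese design; `to_rods` and `from_rods` are hypothetical helpers.

```python
def to_rods(n, rods=5):
    """Represent n on a simplified abacus with `rods` rods.

    Each rod holds one decimal digit as (five_beads, one_beads):
    beads worth 5 above the bar, beads worth 1 below it.
    """
    digits = [int(c) for c in str(n).zfill(rods)]
    return [(d // 5, d % 5) for d in digits]

def from_rods(rods):
    """Read the number back: each rod contributes 5*upper + lower in its place."""
    value = 0
    for fives, ones in rods:
        value = value * 10 + 5 * fives + ones
    return value

print(to_rods(1999))  # [(0, 0), (0, 1), (1, 4), (1, 4), (1, 4)]
```

Each rod is one decimal place, so the abacus is a positional system even though its beads never show a written zero: an empty rod simply stays at rest.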

Yet, computations still proved annoyingly slow, complicated, and filled with errors. One of the big hurdles was working around long strings of characters that made up a single number.

However, India would change that. Mathematicians there, around 500 to 700 CE, established a decimal place-value system using nine distinct characters for the numbers one through nine.

But, because they needed a way to mark an empty place, such as the tens place in 105, they invented zero to serve as a placeholder.

Arabic traders adopted this system and spread it to the rest of the world.

The Hindu-Arabic system transformed mathematics. It is the number system many of us use today.

Tools—Somehow They Need To Store All of This Information

Because people wrote so much all over the world, societies developed ways to keep like materials together—early data storage systems.

The Sumerians had it easy. Just bake the clay and they had a relatively permanent record.

Other societies took wood blocks, bark pieces, or clay tablets and bound them together with leather or some other material to create a triptych or an accordion-shaped package of records.

Others, like the Egyptians and the Chinese, took their plant-based sheets—papyrus and bamboo respectively—and glued the ends together, then rolled it up to create scrolls.

Later, Romans took those same pieces, stacked them, then bound them together to create codices (singular: codex) that opened like a modern book. The Chinese would do this too.

Early Technology—So Many Ideas

For the next 1500 years, this is pretty much where writing remained.

Basically, if you wanted a copy of a document, a scroll, or a codex, someone had to copy it for you.

This was a long, meticulous process.

Yet, during the height of the Roman Empire, roughly 100 BCE to 100 CE, there was, believe it or not, a very brisk “book” trade available to those who could afford to pay a copyist.

And because it was humans doing the copying, there were lots of mistakes.

This system was unsustainable. There had to be a faster way.

So people experimented with molds to create multiple copies of documents.

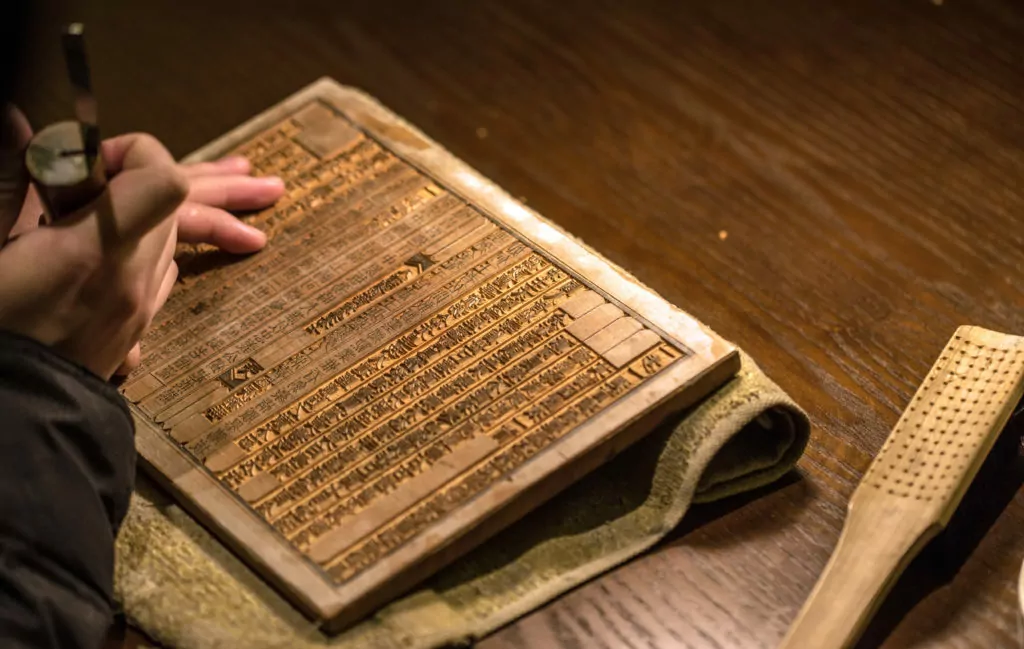

The easiest solution was to carve a wood block in reverse, ink it up, and press some writing medium onto it.

However, sometimes, depending upon the level of detail, the creation of the wood block would take as long as a scribe could copy the document.

The woodblock method worked best for the creation of items like playing cards in the West and for large, standard writing characters in the East. Yet, the blocks would wear out or break.

So, the Chinese experimented with using ceramics and metal which worked reasonably well.

In fact, as early as 971 CE the Chinese began printing a large Buddhist canon, a project that required some 130,000 wood blocks.

The Koreans undertook another huge printing project of Buddhist texts (more than 80,000 wood blocks) during the 1240s.

However, for European writing systems, a special type or block system had to be created and it would take some time to get there.

Many issues contributed to this delay.

The most important was a source of cheap and readily accessible material to print on.

For much of this transitional period, papyrus was the material of choice, but it was expensive to produce and hard to come by because it really had only one true source, the Nile River Valley.

Europeans experimented with animal skins, leading to the creation of a material called parchment that was used for the next thousand years, especially for official documents.

Yet, it would take the invention of paper by the Chinese about 100 CE and its spread throughout the world to change everything.

Like many inventors of the 1400s, Johannes Gutenberg, a goldsmith, was not working in a vacuum. His achievement required a confluence of several different developments.

We also cannot forget that printing existed in other forms using metal presses to do things like the royal seal, hallmarks, signet rings, and even stamps used by bureaucrats.

But, his challenge was how to make a uniform, interchangeable type that would create neat, even lettering and be inexpensive to make.

He also had to determine the correct form of ink as well as the best method to apply it.

In addition, how does one create a press that would exert even pressure over the entire piece of paper?

Gutenberg was not the only person working on this problem.

Yet, through trial and error and learning from other people’s mistakes, he managed to figure out a way. His skill as a metallurgist was the key.

His 1450 invention led to more books being printed in the fifty years after the creation of his press than had been printed in the previous one-thousand years.

The page formatting we all know today started to make its appearance, along with the format we all know and love: the book.

The ability to share knowledge exploded upon Europe and had a major impact on the rapidity of scientific exploration and the development of important systems that led to computers and our modern information systems.

Early Technology—So Many Ways to Measure

While we can’t take the time to discuss all of the mathematical developments that occurred over these 1500 years (there were many), what we can do is talk about some of the key developments that helped improve navigation and, with it, computation.

- One of the very earliest tools was something that was created around 200 BCE called an Astrolabe. Its name roughly translates from the Greek as “star-taker”. And, in a way, that was almost what it did. It was a model of the universe and allowed users to take measurements to help solve questions in astronomy. It could also be used for navigation.

- A similar instrument, developed about 100 BCE, has been called the first computer—Antikythera Mechanism. It was a tool created by the Greeks to predict the movement of the celestial bodies in the heavens through the use of epicycle models, graphics, and other tools. It had scales, clockwork-like gears, and other mechanisms that make it look completely modern.

- The Chinese knew about magnetism very early, about 400 BCE. They first used lodestone compasses for divination and, by about the eleventh century CE, for navigation. Europeans later used compasses as well. For our purposes, knowledge of this force would be important later when scientists sought not only to understand how electricity worked, but also to harness it.

The Birth of True Calculators

As humans explored their world in more depth, they increasingly used mathematics.

People looked to machines to aid in this effort to the point that in 1615, a new word “technology” came into common use. The abacus had become old technology.

Around 1500, Leonardo da Vinci—always ahead of his time—drew his conception of what a calculator might look like.

It was decimal-based, with thirteen geared wheels. Each wheel turned one-tenth as fast as the one before it, so successive wheels recorded ones, tens, hundreds, thousands, and so on. It operated by using a crank.

Today, the drawing survives in a notebook we call the Codex Madrid.

It was never built during da Vinci’s life, but later mechanical calculators would look surprisingly like it.

Initially, scholars looked to written tables or logarithms to solve their computational challenges.

One of the earliest was created by John Napier and published in 1614. His tables of logarithms let people replace multiplication and division with the simpler operations of addition and subtraction.
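The trick behind logarithm tables is that the logarithm of a product equals the sum of the logarithms. This Python sketch uses modern base-10 logarithms from the standard `math` module (Napier’s original tables used a different scale; Henry Briggs worked out base-10 tables shortly afterward) to mimic looking up two logarithms, adding them, and converting back. The function name is illustrative.

```python
import math

def multiply_with_logs(a, b):
    """Multiply two positive numbers as a log-table user would:
    look up both logarithms, add them, then take the antilogarithm."""
    log_sum = math.log10(a) + math.log10(b)
    return 10 ** log_sum

print(round(multiply_with_logs(37, 52), 6))  # 1924.0
```

A slide rule performs the same addition mechanically, by sliding two logarithmic scales so their lengths add.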

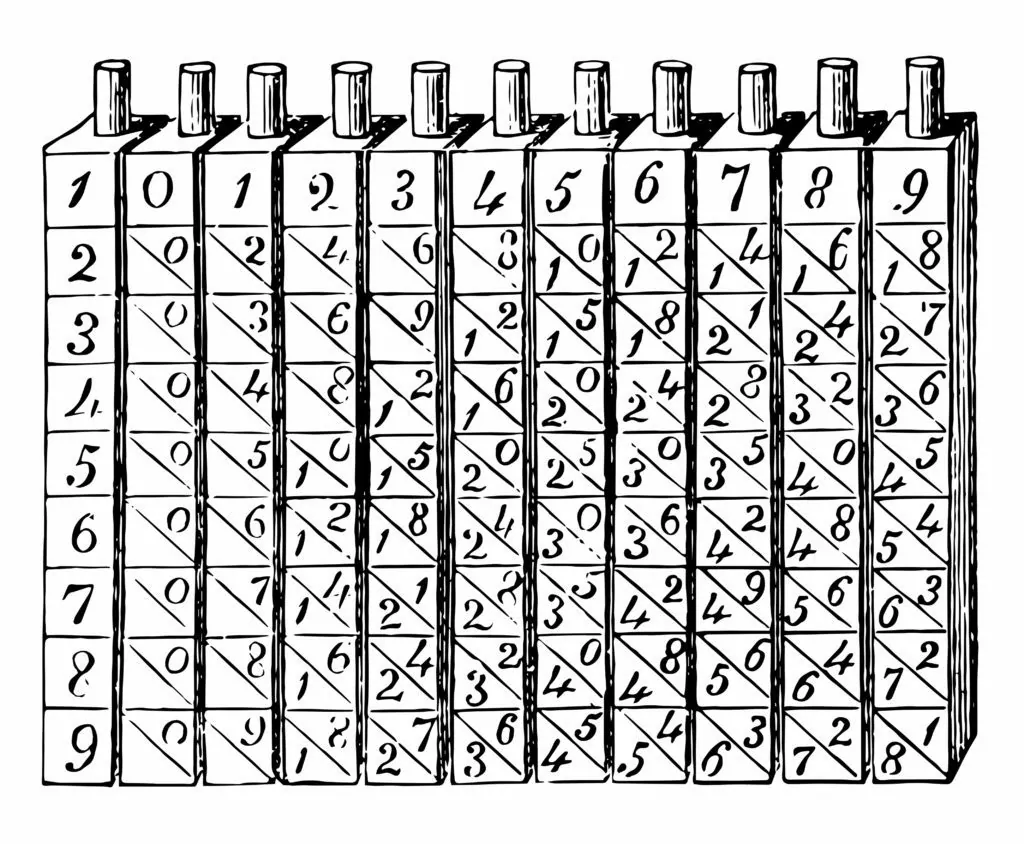

Three years later he published a tool made of narrow rods with multiplication tables written upon them, which turned multiplication into a series of additions. These rods have been called “Napier’s bones.”
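Napier’s rods rely on tables of multiples rather than logarithms. Each rod lists the multiples of one digit, with the tens and units of each product split by a diagonal line; to multiply, you lay the rods for your number side by side and add along the diagonals. The Python sketch below imitates that procedure; the function names are illustrative.

```python
def rod(digit):
    """One of Napier's rods: the multiples digit*1 .. digit*9,
    each stored as (tens, units) like the two halves of a split square."""
    return [divmod(digit * k, 10) for k in range(1, 10)]

def multiply_by_digit(number, k):
    """Lay the rods for `number` side by side, read row k, add along diagonals."""
    if k == 0:
        return 0
    row = [rod(int(d))[k - 1] for d in str(number)]
    digits, carry = [], 0
    # Work right to left: each diagonal holds the units of one square
    # plus the tens of the square to its right.
    for i in range(len(row) - 1, -1, -1):
        tens_from_right = row[i + 1][0] if i + 1 < len(row) else 0
        total = row[i][1] + tens_from_right + carry
        digits.append(total % 10)
        carry = total // 10
    value = row[0][0] + carry  # leftmost diagonal: tens of the first square
    for d in reversed(digits):
        value = value * 10 + d
    return value

def multiply_with_rods(number, multiplier):
    """Many-digit multiplier: one row reading per digit, shifted and added."""
    return sum(multiply_by_digit(number, int(k)) * 10 ** place
               for place, k in enumerate(reversed(str(multiplier))))

print(multiply_by_digit(425, 6))    # 2550
print(multiply_with_rods(425, 36))  # 15300
```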

Other people picked up on this and improved it.

Edmund Gunter in 1620 developed scales that allowed the user to calculate equations without looking at a logarithmic table.

William Oughtred around 1622 invented the first slide rule, but it was circular in shape. He modified it in 1633 to create the rectilinear version that so many of us know.

Slide rules continued to be used well into the 1970s.

In fact, slide rules helped get people to the moon in the late 1960s.

Wilhelm Schickard took an early try to design a better mechanical calculator, what he called a “Rechenuhr.”

He shared his conceptual design in letters with Johannes Kepler around 1623. He claimed it could do basic calculations, even carry over numbers.

In one letter, he described how to build one. It was remarkably sophisticated.

Unfortunately, the one Schickard ordered built was destroyed in a fire so only the drawing exists today.

It took a young man, Blaise Pascal, to create one of the first modern mechanical calculators around 1644. His intent was to help his father manage tax computations more efficiently.

So at age 19, he started building a mechanical calculator that would become known as a Pascaline. Some versions had eight digit wheels, each with ten notches, and it could only add and subtract. You entered a number by inserting a stylus between a wheel’s spokes and turning the wheel. It was cumbersome and never worked well.
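The hard part of any mechanical adder is the carry: when one wheel passes from 9 back to 0, the wheel to its left must advance by one. This Python sketch models a row of ten-notch wheels and the carry rippling leftward. It is a software model of the idea, not a reconstruction of Pascal’s actual mechanism; `dial` and `add_number` are hypothetical names.

```python
def dial(wheels, position, steps):
    """Turn the wheel at `position` forward by `steps` notches (0-9),
    rippling a carry leftward each time a wheel passes 9 -> 0.
    A carry out of the leftmost wheel is lost, like an odometer."""
    carry = steps
    while carry and position >= 0:
        total = wheels[position] + carry
        wheels[position] = total % 10
        carry = total // 10
        position -= 1

def add_number(wheels, n):
    """Enter n digit by digit, ones wheel first, as an operator would."""
    for offset, digit in enumerate(reversed(str(n))):
        dial(wheels, len(wheels) - 1 - offset, int(digit))
    return wheels

wheels = [0] * 8  # eight wheels, ones wheel on the right
add_number(wheels, 999)
add_number(wheels, 1)
print(wheels)  # [0, 0, 0, 0, 1, 0, 0, 0]
```

Adding 1 to 999 is the worst case: a single step on the ones wheel has to trip every wheel to its left, which is exactly where early mechanical carries tended to jam.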

However, Pascal made important contributions in mathematics, philosophy, and science—especially in our understanding of atmospheric pressure.

This latter contribution provided some of the foundational concepts for the creation of modern computers, as we will see.

Thirty years later, in 1673, Gottfried Leibniz started constructing another mechanical calculator called the “Stepped Reckoner.” He completed it in 1694.

This system used gears and rollers with ten different sprockets powered by a crank to change numbers.

This machine could add and subtract as well as multiply and divide, sort of. It had a small but important drawback: its mechanism for carrying numbers did not work reliably, and it never sold.

More importantly, in 1679, Leibniz proposed a new counting system for math that used only two digits, 0 and 1, to speed up computations: the binary system.

Like many of his generation, he saw it in terms of God’s grace—one represented God, zero the absence of God.

No matter how one thinks about it, the binary system would go on to serve as the foundation of many digital languages used in modern information technology.
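Here is a small Python sketch of Leibniz’s idea: repeatedly dividing by two gives the binary digits of a number, and doubling-and-adding reads them back. The function names are illustrative; Python’s built-in `bin` gives the same answer.

```python
def to_binary(n):
    """Write a non-negative whole number using only the digits 0 and 1."""
    if n == 0:
        return "0"
    bits = []
    while n > 0:
        n, remainder = divmod(n, 2)
        bits.append(str(remainder))  # remainders come out lowest place first
    return "".join(reversed(bits))

def from_binary(bits):
    """Read binary digits back: double the running total, add the next digit."""
    value = 0
    for b in bits:
        value = value * 2 + int(b)
    return value

print(to_binary(1679))              # 11010001111
print(from_binary("11010001111"))   # 1679
print(bin(1679))                    # 0b11010001111
```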

On the surface, so many inventions from the seventeenth century may appear unrelated to modern information technology such as understanding barometric pressure or how to create pumps or even how to understand art and nature in geometric terms.

Yet, many of these ideas would lay the groundwork for what would become the modern computer.

Practical Applications

Problem to be Solved: How to manufacture textiles and other goods more quickly, efficiently while maintaining quality control?

By the eighteenth century, society looked to science for technology that could make manufacturing more efficient.

Scientists built upon discoveries about the nature of the heavens made during the fifteenth and sixteenth centuries.

Those atmospheric studies led to questions about how light and air worked as well as the possibility of harnessing barometric pressure and other pressure sources to power industry.

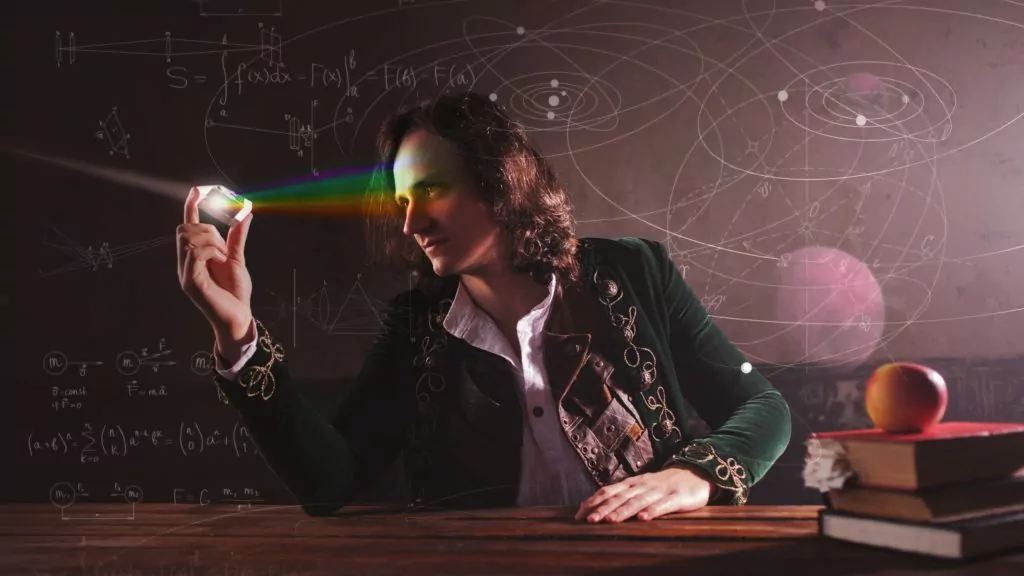

Isaac Newton’s studies in optics in the seventeenth century led to a better understanding of how light worked and how we actually see it: color is the sensation created by light reflected back at us.

He also developed laws of motion that we still use today.

His ideas helped other scientists realize that matter behaves according to particular laws and that we need to study those laws if we want to use them.

Scientists also began to realize important things about the air; that the amount of air in the atmosphere was finite.

Fluid studies led to ideas about atmospheric pressure and suction.

They began to understand that air flowed like water and just like water could move things under pressure or pull them through suction.

These studies led Captain Thomas Savery to create a “fire-engine,” first demonstrated in 1699.

It’s not what you think. Basically, it was an engine fired by steam.

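Water would be boiled by heating (that is, by fire), which, of course, created steam. The steam filled a vessel; when it was cooled and condensed, it left a partial vacuum that sucked water up a pipe, and fresh steam then pushed the water higher. In essence, he created a steam-powered pump.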

Thomas Newcomen built upon this idea creating what is considered the first atmospheric engine in 1712. He became an important figure in the study of thermodynamics.

Others, such as John Smeaton, took these innovations even further around 1760.

And, you guessed it, these efforts led to James Watt’s creation of the double-acting steam engine in 1784.

With these developments, machines could run faster and more efficiently than ever before. So, the next challenge for scientists became:

How do we improve quality control for manufacturing?

How can we create consistency in the products being manufactured for the mass market?

Scientists looked for ways to communicate with the machine. This became especially important for the fabric industry.

How do we tell the loom to replicate the same pattern over and over again?

This was not a new question. Several people had tried to develop a system before then.

All looked to something familiar, something that had been commonplace since the fifteenth century: clock mechanics.

Basically, clocks had an automatic control system that used a cylinder with pegs attached to it.

These pegs would activate an intended action, such as sounding the hour, at the prescribed moment by hitting an appropriate lever. The placement of these pegs ensured an accurate measure of time.

One of the first people to create a possible functioning loom control system was Jacques de Vaucanson.

Around 1750, he wrapped a sheet of paper punched with holes around a metal cylinder; the holes determined which hooks were engaged.

As the cylinder rotated, the hooks would lift the threads in the loom at the appropriate time to create a replicable pattern.

While a technically sound system, it was expensive to create and it was difficult to switch out the cylinder for another pattern. Even so, it was a step in the right direction.

De Vaucanson would become better known for his lifelike automata, early ancestors of today’s robots.

His vision for an automated loom would have to wait another fifty years.

In 1801, Joseph Marie Jacquard demonstrated a loom controlled by a chain of punch cards. Each position on a card was either punched or not, a two-state choice much like the binary digits Leibniz described.

The idea was that one row of holes matched one row of the fabric. The loom would know which threads to use or not to use based upon the sequence of holes in that row.

It was ingenious and led to the full automation of looms. It also offered clues about methods for programming machines into the future.
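A toy Python model shows the principle. It assumes each card is one row of the pattern, with an `O` for a hole and a `.` for solid card; where there is a hole, the hook passes through and lifts its thread. The card format and names here are invented for illustration, not taken from a real Jacquard loom.

```python
# Each string is one card; "O" marks a hole, "." marks solid card.
CARDS = [
    "O.O.O.O.",
    ".O.O.O.O",
    "O.O.O.O.",
    ".O.O.O.O",
]

def weave(cards):
    """Return one line of 'fabric' per card: where a hook passes through a hole,
    its thread is lifted (shown as #); elsewhere it stays down (shown as -)."""
    return ["".join("#" if c == "O" else "-" for c in card) for card in cards]

for row in weave(CARDS):
    print(row)
# #-#-#-#-
# -#-#-#-#
# #-#-#-#-
# -#-#-#-#
```

Changing the pattern only means swapping in a different stack of cards; the loom itself stays the same. That separation of machine and instructions is the clue for programming mentioned above.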

Electricity Lends a Hand

One thing was certain: by the end of the eighteenth century, many of the foundational scientific principles that laid the groundwork for future information technology had been discovered.

Now those amazing problem solvers needed to refine what they had learned.

Energy generation became the most important question going into the nineteenth century.

For most of humanity’s existence we used water, some type of material that burned, or the pure strength of humans or animals for our energy source. One can easily see that those sources had their limitations.

With the advent of steam engines, coal was burned to generate steam.

Others experimented with other fossil fuels. And some looked towards electricity.

It was Alessandro Volta who learned how to harness electricity through his experiments with electrical charges.

He even created a machine to produce static electricity. From there, he continued his experiments with atmospheric electricity which led to an important discovery.

He realized that stacking alternating copper and zinc discs, separated by cardboard soaked in brine, could create an electrical current.

This set up would become known as a “Voltaic Pile”—the first electric battery.

With this discovery, first announced in 1800, the electrical age had begun.

Problem to be Solved: How do we take technology and scientific know-how to create systems that allow us to compute and to communicate better and faster?

Many people worked on ideas to improve computation and communication throughout the nineteenth century and sadly we can’t discuss everyone here.

Even so, it is important to know that this was a vibrant, exciting time for technological development that evolved into what we now call information technology.

Electricity took center stage in all of these inventions.

Very early in the century, four people would make remarkable discoveries and inventions that would create a field of study called electromagnetism.

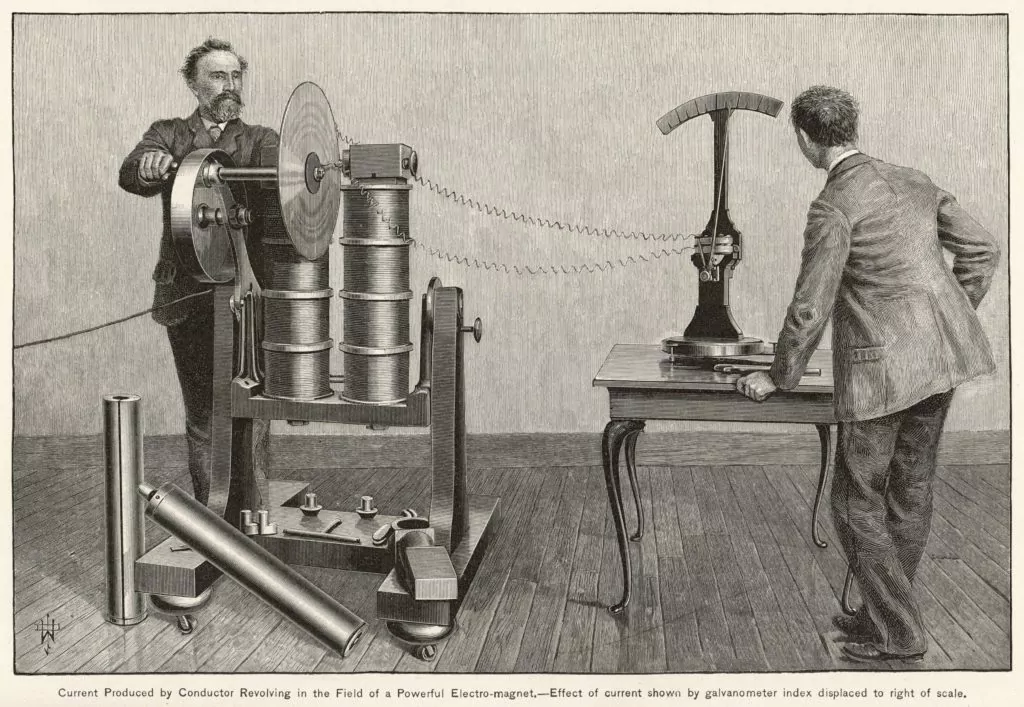

- In 1820, Hans Christian Oersted discovered during an experiment that if you run a wire carrying an electrical current near a magnetic compass, the needle swings away from its usual north-pointing position. This led to the creation of the galvanometer, which could detect small amounts of electrical current.

- Andre-Marie Ampere developed theories, including Ampere’s law, that addressed the relationship between electricity and magnetism. The unit we still use today to measure electrical current, the ampere or amp, is named after him. He published his findings in 1826. Ampere suggested that it might be possible to create telegraphy by placing small magnets at the ends of wires.

- William Sturgeon in 1825 developed an electromagnet. While it doesn’t sound all that impressive, this remarkably simple tool, made of turns of copper wire wrapped around an iron core, permitted electricity to be included in the design of machinery. His invention would prove to be a key component in telegraphy.

- Michael Faraday changed people’s view of electricity. In 1831 he discovered the phenomenon of electromagnetic induction. He showed that electricity from different sources was one and the same, not multiple kinds of electrical current, as previously thought. He determined that a changing magnetic field induces a current, but a steady magnetic field does not. He also developed the idea of “lines of force,” and his work led to the invention of the “dynamo,” an early electrical generator.
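In modern textbook notation, written down after Faraday (who described his results in words and diagrams), the law of induction reads as follows, where $\mathcal{E}$ is the induced voltage, $N$ the number of turns in a coil, and $\Phi_B$ the magnetic flux through it:

$$
\mathcal{E} = -N \, \frac{d\Phi_B}{dt}
$$

If the field is steady, $\Phi_B$ does not change, its derivative is zero, and no current is induced, which is exactly the distinction Faraday drew.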

Theories related to computation also took gigantic leaps at this time.

If anyone deserves the title of father of the computer, it should be Charles Babbage.

This title does not come to him because of what he accomplished so much as because of what he envisioned.

In the early 1820s, he obtained funding from the British government to create a steam-driven calculating machine called the “Difference Engine.”

It would permit the calculation of tables by a “method of differences.”
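The “method of differences” is easy to see in miniature. For a polynomial, some column of successive differences eventually becomes constant, so once the first few values are known, every later table entry can be produced by addition alone, which is what made the approach suitable for gears. The sketch below is a modern illustration, not a model of Babbage’s actual mechanism; the function names are hypothetical.

```python
def initial_differences(f, degree):
    """Return [f(0), first difference, second difference, ...] for a polynomial f."""
    values = [f(x) for x in range(degree + 1)]
    diffs = []
    while values:
        diffs.append(values[0])
        # Next row of differences: each value minus the one before it.
        values = [b - a for a, b in zip(values, values[1:])]
    return diffs


def tabulate_by_differences(diffs, steps):
    """Produce `steps` table entries using only addition, as a difference engine would."""
    diffs = list(diffs)
    table = []
    for _ in range(steps):
        table.append(diffs[0])
        # Each column adds in the column to its right; the last column never changes.
        for i in range(len(diffs) - 1):
            diffs[i] += diffs[i + 1]
    return table
```

For example, `tabulate_by_differences(initial_differences(lambda x: x * x + x + 41, 2), 6)` returns `[41, 43, 47, 53, 61, 71]`, the values of x² + x + 41 for x = 0 to 5, with no multiplication inside the tabulating loop.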

Yet, while he was still working on this machine, he came up with another, more brilliant idea, which he described in 1837: the “Analytical Engine.” It went his glorified calculator one better.

This “Engine” would have a “store” to hold data and a “mill” that would serve as the calculating and decision-making component. These units would be built out of thousands of cylinders.

The goal was to create a machine that could work through many different algebraic equations. The “mill” would be programmed through punch cards similar to those developed by Jacquard.

Babbage also had a friend, Augusta Ada Byron King, Countess of Lovelace, who was a brilliant mathematician in her own right and quickly grasped what Babbage was trying to do.

Then, with her active, creative, logical mind, she began to see beyond it.

She envisioned a machine that could perform “operations,” that is, “any process which alters the mutual relation of two or more things.”

She saw Babbage’s machine performing these operations beyond calculation. It could also address “symbols” and “meaning.”

The example she offered, in her 1843 notes on the Engine, was a machine that could compose a piece of music using representations of pitch and harmony.

She created a set of rules for carrying out such processes, something that many of us today would recognize as an algorithm.

Her core idea in all of this was something they called a “variable.”

Her best-known example was a procedure for calculating the Bernoulli numbers, a sequence of rational numbers first studied by Jacob Bernoulli. She expressed it in terms of what she called variable cards; what many of us would call punch cards.
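To give a feel for the kind of calculation involved, here is a minimal modern sketch that computes Bernoulli numbers exactly. It uses a standard recurrence and the modern numbering (B₁ = −1/2); Lovelace’s notes used a different numbering and a different sequence of operations on the Engine, so this is an illustration of the mathematics, not a reconstruction of her program. The function name is hypothetical.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(n):
    """Return [B_0, ..., B_n] as exact fractions (modern convention, B_1 = -1/2)."""
    b = [Fraction(1)]
    for m in range(1, n + 1):
        # The numbers satisfy sum_{k=0}^{m} C(m+1, k) * B_k = 0; solve it for B_m.
        total = sum(comb(m + 1, k) * b[k] for k in range(m))
        b.append(-total / (m + 1))
    return b
```

Each step reuses every earlier result, much as her table of operations fed intermediate values held in the Engine’s “store” back into the “mill.” `bernoulli_numbers(8)` ends with `Fraction(-1, 30)`.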

What was truly extraordinary about all of this was she was already programming an incredibly powerful calculator and information processor before any such calculator or processor actually existed.

Babbage never built the Analytical Engine, so she had nothing physical to work with.

It was all done in her head.

But, because of her ideas, she is often called the first computer programmer.

So, why does Babbage matter?

If one knows how the earliest electronic computers, such as ENIAC, worked, one will instantly see Babbage’s ideas in them: a storage place for data and a “mill” that coordinated the different processing units and combined each unit’s results into a final answer.

What was so eerily prescient about Babbage’s system was its use of punch cards to input data and instruct the computer.

Like so many ideas that came before their time, the idea would continue to bounce around in people’s heads until the time was right and the idea was manifest.

Babbage’s dream of a mechanical brain was ahead of its time.

Another great thinker ahead of his time was a mathematician and philosopher named George Boole, a contemporary of Babbage.

Boole agreed with Gottfried Leibniz’s ideas about logic. That is, he considered logic to be not a branch of philosophy but a discipline of mathematics.

In his 1854 book, An Investigation of the Laws of Thought, he presented the idea that logic could be broken down into algebraic equations, and he used such equations to illustrate his point.

Basically, these equations manipulated symbols where those symbols could represent a group of things or statements of logic.

The whole outcome hinged on the fact that each statement had to be either true or false; basically a binary response where the answer was either 1 or 0.
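A small sketch shows why restricting values to 0 and 1 turns logic into arithmetic. Boole’s key law was that x · x = x, which holds only for 0 and 1. The inclusive “or” written below as x + y − xy is a modern convenience; Boole himself used x + y only for classes that do not overlap. The function names are illustrative.

```python
from itertools import product


def and_(x, y):
    # "x and y" is true only when both are 1: plain multiplication.
    return x * y


def or_(x, y):
    # Inclusive "or": add, then subtract the overlap counted twice.
    return x + y - x * y


def not_(x):
    # "not x" flips 1 to 0 and 0 to 1.
    return 1 - x


def truth_table():
    """Rows of (x, y, x AND y, x OR y, NOT x) for every combination of 0 and 1."""
    return [(x, y, and_(x, y), or_(x, y), not_(x)) for x, y in product((0, 1), repeat=2)]
```

Every row of `truth_table()` matches the familiar logic tables, which is exactly the connection later engineers exploited when they built switching circuits.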

About eighty years later, a number of people studying how computers could process information in binary code made this connection and used Boolean logic to carry out computer operations.

Other fields such as library science also used this concept to create Boolean Search systems.

Telegraphy, the grandfather of radio, also made its appearance around this time. And as with so many inventions, it came about through multiple discoveries by several people.

During the late eighteenth century, many attempted to send impulses of electricity via wire over long distances.

Georges-Louis Le Sage (1774), Francisco Salva y Campillo (1804), and Samuel Thomas von Soemmerring (1809) all tried to send electrical impulses across wire, but none of these experiments reached further than the next room.

However, Sturgeon was able to prove definitively that impulses could be sent over wire using his electromagnet.

A Russian, Baron Pavel Schilling, also did a similar experiment in 1832.

But it was American Joseph Henry who demonstrated this principle resoundingly in 1831, sending an electrical current through nearly a mile of wire to ring a bell at the other end: the world’s first electrical doorbell.

In the end, it became a competition, in essence, between William Cooke and Charles Wheatstone in England and Samuel Morse and Alfred Vail in the United States over who would create a system that actually worked and gained ascendancy over the other.

The English team developed a method in which electricity powered five magnetic needles that pointed at a panel of 20 letters with corresponding numbers.

However, the panel did not have the letters C, J, Q, U, X or Z. The operator would push a button for the number that corresponded to the chosen letter.

The person on the other end would see two needles point to the letter on a diamond-shaped panel, write down that letter, and then wait for the next one. Cumbersome, to say the least.

And, it took some time to operate, around two seconds for each letter.

However, when telegraphy really took hold in the 1840s, the Cooke-Wheatstone system was used as the key communication system along the railways throughout Great Britain.

The Morse-Vail system proved much simpler.

They developed a way to send and receive an electrical impulse over a single wire.

Vail invented the telegraph “key” that would make their system so efficient. Morse patented this invention in 1837.

A year later, they introduced a distinctive code that used dots and dashes to represent letters of the alphabet: what we now call Morse Code, although some would argue it really should be called Vail Code.
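The idea behind a dot-and-dash code fits in a few lines. The table below is the later International Morse Code, which differs in places from the American code Morse and Vail actually used, so treat it as an illustration of the principle rather than their original alphabet. Notice that the most common English letters, E and T, get the shortest signals. The function names and the “ / ” word separator are assumptions for this sketch.

```python
# International Morse Code for A-Z (the later standard, not Vail's original table).
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}
DECODE = {code: letter for letter, code in MORSE.items()}


def encode(text):
    """Letters are separated by spaces and words by ' / '."""
    return " / ".join(" ".join(MORSE[ch] for ch in word) for word in text.upper().split())


def decode(signal):
    """Reverse of encode: split into words, then into individual letter codes."""
    return " ".join("".join(DECODE[code] for code in word.split()) for word in signal.split(" / "))
```

For instance, `encode("sos")` returns `"... --- ..."`. Because every symbol is just a short or long pulse on one circuit, the whole alphabet travels over a single wire.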

Other coding systems failed because they used cumbersome wire setups, such as one wire per letter of the alphabet.

The Morse-Vail system’s use of only one wire made Morse telegraphy and its accompanying code simple and inexpensive to use and, thus, ready for adoption by an eager market.

By the 1840s, both systems were in common use in their respective countries, yet it would be the Morse-Vail system that would eventually win out because of its simplicity in design and ease of function.

And it is important to note here that telegraphy was not one invention that spread, but several different inventions in several countries, with one of them, the Morse-Vail system, coming into greater use.

Over the next few decades there would be many other ingenious innovations that would improve upon these systems providing greater efficiency and flexibility.

As we have seen before, invention is a gradual building upon other people’s ideas.

With the telegraph, electricity made long-distance communication that much easier.

Problem to be Solved: Now that we have found a way to control electricity, how can we light houses? How can we transmit sound? How can we speed up calculations?

The next big challenge was how to transmit sound over long distances.

It was well known that sound was a wave and that it could travel through solid material such as a wire. Most of us have done some variation of the cup-and-string experiment that proves this point.

The real challenge became how to amplify that sound and then transport it long distances. Lots of people looked into this problem.

One key player was an Italian, Innocenzo Manzetti, who developed an idea to get a voice to carry in 1843.

He would go on to build a device to do this in 1864, calling it a “speaking telegraph.” Sadly, he did not pursue a patent. If he had, he might have been called the inventor of the telephone.

Another Italian, Antonio Meucci, in 1856 actually developed a device that sent sound from one floor to another in his house.

Johann Philipp Reis created a machine that transmitted a voice one hundred meters in the early 1860s.

In 1874, a man by the name of Elisha Gray transmitted musical tones over wire.

Yet the most famous was Alexander Graham Bell. And, as with many important inventions, the inventor was looking into something else when he stumbled upon his idea. In 1871, he was investigating how to send multiple messages over a wire at the same time, called harmonic telegraphy.

Yet the idea of transporting the human voice became his all-consuming passion.

The key would be the invention of a transmitter and a receiver. He and his partner, Thomas Watson, studied how the human ear functioned and modeled their transmitter on it: a vibrating diaphragm that converted sound into a varying electrical current.

The receiver would detect the electrical impulse in the wire and convert it back into sound waves that one could hear through an earpiece.

While many people were working on this problem and could reasonably claim to have invented the telephone, it was Bell who received the patent in 1876.

Now that we could transmit voice messages by wire, how could we do it without one?

Wireless became the next big scientific hurdle to overcome.

This is where all of those fifteenth- through eighteenth-century experiments regarding waves and atmospheric pressure would become important.

Both Michael Faraday and James Clerk Maxwell would lead the way.

Faraday realized that light had electromagnetic qualities and could move through air.

In 1839, he presented a theory that electricity produced tension in the objects it passed through. As the tension built, the pressure had to go somewhere, so it moved through the object. Thus, electricity could move through an object like a wave.

This led to the breakthrough concept of a magnetic field.

His other experiments presented important concepts, such as electromagnetic induction, that would provide the solution years later for how to amplify sound.

Maxwell, in the 1850s and 1860s, developed a mathematical theory showing that changing electric and magnetic fields could sustain each other and travel through space as a wave.

He also determined that if the current pulsed at a higher frequency there was less resistance.

He predicted that these electromagnetic waves, which include what we now call radio waves, should be detectable.

However, it would be Heinrich Hertz who would pull their ideas together. In 1886, he developed a detector for high frequency oscillations, called a resonator. He also determined that the radio waves could be projected into space like light and heat.

One of the first wireless communications actually occurred in 1865 on a mountain in West Virginia.

A dentist named Mahlon Loomis flew a kite that had a large copper plate on it. That kite was connected to a galvanometer. Eighteen miles away there was a similar setup. When one of the kites was excited by electricity the other could “detect” it.

However, it would be Guglielmo Marconi who would take all of these ideas to develop a system that could send telegraph messages wirelessly.

His system had both a transmitter and a receiver that used elements from various scientists working on the same project. His first transmission was in Italy in 1895.

While Marconi’s system was imperfect because it often suffered from atmospheric disturbance, his system showed his scientific colleagues that their theories actually did work when combined in a particular way.

He would continue his experiments and receive a patent in 1899.

In 1901, he would conduct a transatlantic test that proved successful. Wireless telegraphy made the world even smaller and would permit the development of radio.

Other important inventions during this time included Thomas Edison’s light bulb first demonstrated in 1879.

He then founded the Edison Illuminating Company in 1880, which not only produced electricity using generators but also built a system to distribute it.

By 1882, New York City would become one of the first electrified cities in the world.

His electrical systems would spread throughout the United States and to the rest of the world, evolving as they went. Electricity became a dominant energy source.

The world of computation also evolved. One of the earliest mechanical calculators, the Arithmometer, was created by Charles Xavier Thomas, who received a French patent for it in 1820. It performed basic arithmetic.

About thirty years later, in 1851, he restarted its manufacture, and it would become one of the most successful calculators, remaining on the market until 1915.

A Swedish father and son, Pehr-Georg and Edvard Scheutz, worked to improve calculation tools.

One of their key inspirations was Babbage’s Difference Engine. Building upon his ideas, they created their own difference engine, making it commercially available in 1853. One of its most notable features was that it could print its results.

Another important innovation occurred in the 1870s on both sides of the Atlantic with the advent of the pin-wheel calculator of Frank Baldwin in the US and of Willgodt Odhner in Sweden.

A pinwheel system meant it had a set of rotors or wheels with retractable pins that acted like gear teeth to control how the wheels rotated based upon the numbers entered.

It was a very popular design until 1884 when Dorr Felt developed a new kind of calculator that worked by pressing keys, which was called a key-driven calculator. He called his new system a Comptometer. It would remain popular until the 1950s.

And in 1891, William Burroughs would commercially manufacture his adding machine that used a keyboard and a printer. It was this adding machine that would set the standard for adding machines and calculators well into the twentieth century.

Other developments in printing and communication also occurred in the second half of the nineteenth century that would become significant to computer and information technology. Almost as soon as telegraphic technology had been established, inventors sought ways to print messages.

After several false starts, one of the earliest successes was a system created by Royal Earl House in 1846 which used a piano keyboard of twenty-eight keys representing letters in the alphabet with a shift key for alternative characters like punctuation.

The printer functioned much like a daisy-wheel printer. It worked as long as the sending and receiving ends were synchronized; it got messy if they weren’t.

In 1855, David Edward Hughes improved upon this system, making the printing mechanism more efficient, to the point that a young American company, then called the American Telegraph Company and later absorbed into Western Union, chose to use it.

In 1867, Edward A. Calahan, an employee of the American Telegraph Company, invented ticker tape to be used to help transmit investment and stock market information faster than ever before.

A significant development came in 1874, when Frenchman Emile Baudot invented what is often called a five-unit selecting code system, which permitted multiple transmissions and printing operations to share a line at the same time.
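Two ideas are packed into that description: every character is a fixed group of five on/off units (2⁵ = 32 possible combinations), and several operators can share one line by taking turns. The sketch below illustrates both under loud assumptions: the letter-to-code table (A = 00001 through Z = 11010) is hypothetical and is NOT Baudot’s historical assignment, and each frame carries a sender number for readability, whereas in a synchronized system the time slot itself would identify the sender.

```python
from itertools import zip_longest

UNITS = 5  # five on/off units per character give 2**5 = 32 possible codes


def to_code(letter):
    """Hypothetical assignment A=00001 ... Z=11010; NOT Baudot's historical table."""
    return format(ord(letter) - ord("A") + 1, f"0{UNITS}b")


def from_code(code):
    return chr(int(code, 2) - 1 + ord("A"))


def multiplex(messages):
    """Interleave one character from each sender per rotation, sharing one line."""
    frames = []
    for turn in zip_longest(*messages):
        for sender, letter in enumerate(turn):
            if letter is not None:  # a sender with nothing left simply skips its slot
                frames.append((sender, to_code(letter)))
    return frames


def demultiplex(frames, senders):
    """Hand each received five-unit code back to the operator it came from."""
    received = [""] * senders
    for sender, code in frames:
        received[sender] += from_code(code)
    return received
```

Because every character has the same length, the receiving end always knows where one character ends and the next begins, which is what made fixed-length codes attractive for machine printing.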

For many, information now could be conveyed in real time through telegraphy and printed to paper.

Two brothers made additional contributions to the future computer technology—James Thomson and William Thomson (Lord Kelvin).

While the latter brother is more famous for his work in thermodynamics, and more specifically for the absolute temperature scale he proposed in 1848 that bears his name (the Kelvin scale), both brothers made significant contributions to the development of computing.

Lord Kelvin created shipboard equipment, a “floating laboratory,” that used a wire to take depth soundings of the ocean bottom and record the information. He also built a machine that aided in predicting the tides.

His brother created a wheel-and-disc integrator, which they called an integrating machine, that could perform integration mechanically. It was one of the first analog computers and would serve as a model for later machines, called differential analyzers, that solved differential equations.

Lord Kelvin also achieved fame by developing a method to lay transatlantic cable across the ocean floor in the 1850s.

Yet probably one of the most critical inventions for information technology came toward the end of the nineteenth century, when inventor Herman Hollerith saw how long it took the US government to process the 1880 Census data: eight years.

Because of the massive growth in population, government officials feared that it might take even longer for the 1890 Census.

So he developed a calculating machine that took Babbage’s and Lady Lovelace’s punch card idea to help speed data entry into the calculator. And it worked!

It took only a year to fully tabulate the 1890 census.

Hollerith then founded the Tabulating Machine Company that would evolve into the International Business Machines Corporation, better known to most of us as IBM.

Innovation

After the dawn of the twentieth century, telegraphy, radio, and computation took extraordinary leaps in technological development.

Sadly, many of these improvements were rooted in war time efforts.

As several historians have said, war is good for business. By the same token, it is also good for technological development. Electricity would power everything.

Problem to be Solved: How do we communicate more directly at greater distances? How do we create computational systems that do functions faster and greater than ever before? How do we manage electrical power?

Scientists now understood the fundamentals of transmitting not only wireless signals but also voice signals over wire. The real challenge became how to combine wireless transmission with voice.

The first big step in that direction came in 1906.

Canadian Reginald Aubrey Fessenden had been studying telegraphy to aid in weather forecasting.

In 1900, he developed a method of combining low-frequency sound with high-frequency wireless signals; a receiver could then separate the two and recover the sound.

It became known as amplitude modulation, or AM.

He was able to transmit a voice for a mile using this method.
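The principle behind amplitude modulation can be simulated with plain arithmetic. The sound wave scales the strength (amplitude) of a much faster carrier wave; the receiver recovers the sound by rectifying the signal and smoothing away the fast carrier, a simple “envelope detector.” This is a minimal sketch under assumed numbers (a 4,000 Hz carrier sampled 40,000 times per second, far below real radio frequencies), and the moving average is a deliberately crude stand-in for a real low-pass filter. The function names are hypothetical.

```python
import math


def am_modulate(message, carrier_hz, sample_rate, depth=0.5):
    """Amplitude-modulate a carrier: s[n] = (1 + depth * a[n]) * cos(2*pi*fc*n/rate).

    `message` holds audio samples scaled to [-1, 1]; depth < 1 keeps the envelope positive.
    """
    return [
        (1 + depth * a) * math.cos(2 * math.pi * carrier_hz * n / sample_rate)
        for n, a in enumerate(message)
    ]


def envelope_detect(signal, window):
    """Recover the audio: rectify the signal, then smooth it with a moving average.

    Averaging over `window` samples (about one carrier cycle) removes the fast
    carrier and leaves the slowly varying envelope, i.e. the original sound.
    """
    rectified = [abs(x) for x in signal]
    out, running = [], 0.0
    for i, x in enumerate(rectified):
        running += x
        if i >= window:
            running -= rectified[i - window]
        out.append(running / min(i + 1, window))
    return out
```

Feeding a 100 Hz tone through `am_modulate(tone, 4000, 40000)` and then `envelope_detect(..., window=10)` gives back a scaled, slightly delayed copy of the tone riding on a constant offset, which is the sound a listener would hear once the offset is blocked.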

After a few years of perfecting this system, he did a demonstration on Christmas Eve 1906 where he was able to transmit music and read Bible verses.

The age of radio had begun, but it would not become commercially available until the 1920s.

A related system that detected radio signals bouncing off solid objects would come into use during World War II: Radio Detection and Ranging, known to most of us as RADAR.
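The “ranging” part of RADAR comes down to one formula: a radio pulse travels at roughly the speed of light, out to the object and back, so the distance is the speed multiplied by the round-trip time, divided by two. The sketch below ignores the slight slowing of radio waves in air; the function name is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def echo_range_m(round_trip_s):
    """Distance to a reflecting object, given the pulse's round-trip travel time.

    The pulse goes out and comes back, so the one-way distance is half the total path.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2
```

An echo that returns after one millisecond, `echo_range_m(0.001)`, puts the object about 150 kilometers away.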

One key development at the turn of the twentieth century that would have real importance to future computers was the creation of a teletype system in 1906 by a father and son team, Charles and Howard Krum.

Their method used a start-stop synchronized printing system that permitted effective telegraph printing. They partnered with Joy Morton of the Morton Salt Company, forming the Morkrum Company, which took the new printing system to market in 1910.

Six years later, Edward E. Kleinschmidt developed a system using a typewriter-like keyboard with a type-bar page printer. His company later merged with Morkrum after several rounds of lawsuits.

In 1928, the company became known as the Teletype Company.

Teletype would dominate mass communication until the 1960s.

It created innovations not only for wartime communications, but also for journalistic communications through outlets like Associated Press, weather forecasting and reporting, improved railroad operations and communications, law enforcement operations, Red Cross logistics, and many other areas where distance communications were required.

Both full sheets as well as ticker tape width paper could be used on their various printing systems throughout their years of operation.

The teletype keyboard that evolved from Kleinschmidt’s invention was used as the main input and output tool for computer programmers and early personal computer users.

And it would be on teletype tape that one version of the new computer programming language BASIC (Beginners’ All-purpose Symbolic Instruction Code) was written in 1964.

The 1920s also saw the development of early television.

The first steps went back to Faraday’s and Henry’s work with electromagnetism in the 1830s. In 1862, Giovanni Caselli transferred the first image over wire using a device he called a Pantelegraph.

Scientists started experimenting with selenium as a way to transfer images electronically.

Additional theories were tested.

In 1884, Paul Nipkow developed a rotating disk system that could send images over wires electronically. He called it an “electric telescope.”

At the 1900 World’s Fair in Paris, the word “television” was first used and various inventions were demonstrated there.

In 1906, Lee de Forest invented the Audion tube used to amplify signals, the beginning of vacuum tubes used for communication purposes.

Boris Rosing in 1907 and Campbell Swinton in 1908 experimented with cathode ray tubes to transmit images.

In 1923, Vladimir Zworykin received a patent for his kinescope picture tube and in 1924 for his iconoscope camera tube. He received a patent for color television in 1929.

Philo Farnsworth would file for a patent in 1927 for his complete television system he called the Image Dissector.

Television broadcasts would begin in the 1930s with one of the very first broadcasts occurring in Germany in 1935, with some smaller efforts as early as 1930 in the United States and Great Britain.

Television would not become commonplace in people’s homes until the 1950s.

Key Innovation: Vacuum Tubes!

Remember all of those atmospheric and barometric pressure studies conducted by experimenters starting in the fifteenth century that led to the discovery of vacuums? Well, vacuum tubes are their progeny. Basically, a vacuum tube controls the flow of electrons between two metal electrodes sealed inside an evacuated glass or ceramic container. These tubes could do many jobs, such as amplifying a weak electrical current, generating oscillating radio-frequency signals, converting alternating current into direct current, and many other purposes.

Although a variation on this concept had been developed by the late nineteenth century, it did not come into common use until De Forest’s creation in 1906.

These vacuum tubes became ubiquitous in electronic devices throughout the first half of the twentieth century, until transistors and later integrated circuits began replacing them in the 1950s and 1960s.

The first computers used vacuum tubes as the basic components of their circuitry and of the memory for the CPU (Central Processing Unit).

Most of these computers used punched cards to input and store data externally.

They also used rotating magnetic drums for internal data storage, with programs written in machine language (instructions written as strings of 0s and 1s, or binary) or in assembly language, which let the programmer write instructions in a shorthand that a separate program, called an assembler, translated into machine language.
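The job of an assembler can be shown with a toy example. The machine below is entirely hypothetical: each instruction is one 8-bit word made of a 3-bit operation code followed by a 5-bit memory address, and the four mnemonics are invented for illustration rather than taken from any historical computer. The assembler simply looks up each mnemonic and packs the bits.

```python
# Hypothetical instruction set: 3-bit opcode + 5-bit address = one 8-bit word.
OPCODES = {"LOAD": 0b001, "ADD": 0b010, "STORE": 0b011, "HALT": 0b111}


def assemble(source):
    """Translate mnemonic lines such as 'ADD 4' into 8-bit machine words ('0'/'1' strings)."""
    words = []
    for line in source.strip().splitlines():
        parts = line.split()
        opcode = OPCODES[parts[0]]
        address = int(parts[1]) if len(parts) > 1 else 0
        if not 0 <= address < 32:  # only 5 bits are available for the address
            raise ValueError(f"address out of range: {address}")
        # Shift the opcode into the top 3 bits and place the address in the low 5.
        words.append(format((opcode << 5) | address, "08b"))
    return words
```

Given the four lines `LOAD 3`, `ADD 4`, `STORE 5`, `HALT`, it produces `00100011`, `01000100`, `01100101`, `11100000`: the strings of 0s and 1s a programmer would otherwise have had to write by hand.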

For computers, several important innovation steps occurred in the 1930s.

In 1931, Vannevar Bush, who was working at the Massachusetts Institute of Technology (MIT), developed an improved electromechanical differential analyzer that was built for general purposes rather than specific tasks, unlike the integrating machines created by the Thomson brothers back in the 1870s.

It used many of the same components, such as the wheel-and-disc integrator, but could solve a myriad of mathematical problems using multiple “black boxes” that worked together and provided feedback.

These boxes would be connected by placing plugs into sockets on a panel, a switchboard.

Later computers would use this method throughout the 1940s and 50s. However, it did not use vacuum tubes, but it did run on electricity making it an electromechanical machine.

Other important steps with electromechanical calculators occurred around 1937.

George Stibitz, a mathematician working at Bell Telephone Laboratories and the person who coined the term “digital” in 1942, would build upon the idea of using a switchboard to control electrical current using a binary system, what he would call a “breadboard” circuit.

Basically, the system could count in binary form based upon the flow of electricity.

While the flip-flop, a circuit that holds either a 0 or a 1, was not new to electronics (it had been around for decades), it did transform how electricity could be used to run calculations. This would be an important innovation for the development of computers.

The resulting Complex Number Calculator (also called the Model I), although not considered a true computer, would become the first electric digital calculator at Bell Telephone Laboratories.
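Counting in binary with on/off switches comes down to one small rule, applied digit by digit. The sketch below models each switch or relay as a bit and builds a multi-digit adder from “full adder” steps that pass a carry along, in the spirit of the relay circuits described above. It is a modern logical illustration, not a reproduction of Stibitz’s wiring, and the function names are hypothetical.

```python
def full_adder(a, b, carry_in):
    """Add three bits, each standing for a switch or relay that is off (0) or on (1)."""
    total = a ^ b ^ carry_in  # 1 when an odd number of inputs are on
    carry_out = (a & b) | (carry_in & (a ^ b))  # 1 when at least two inputs are on
    return total, carry_out


def add_binary(x_bits, y_bits):
    """Add two equal-length bit lists (least significant bit first), rippling the carry."""
    result, carry = [], 0
    for a, b in zip(x_bits, y_bits):
        bit, carry = full_adder(a, b, carry)
        result.append(bit)
    result.append(carry)  # a final carry becomes the extra high-order bit
    return result


def to_bits(n, width):
    """Integer to a list of bits, least significant first."""
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits):
    """List of bits, least significant first, back to an integer."""
    return sum(bit << i for i, bit in enumerate(bits))
```

Adding 5 and 3, `add_binary(to_bits(5, 4), to_bits(3, 4))` gives `[0, 0, 0, 1, 0]`, which is binary 1000, or 8.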

Howard Aiken, a physics instructor at Harvard, believed, mistakenly as it turned out, that electromechanical computers would be the wave of the future, because he liked machines that could be adjusted mechanically rather than electronically through vacuum tubes.

In 1937, he presented his proposal to create something he would call the Automatic Sequence Controlled Calculator, also known as the Mark 1, that in his mind would be “Babbage’s Dream Come True.” (McCartney, 27)

His machine would be programmed by a long strip of paper tape and would compute sequences directly.

In addition to these components that harkened back to Babbage, this behemoth machine, weighing five tons, used wheels with ten digits on them, had 750,000 moving parts, and everything was connected by a drive shaft.

Its primary innovation was that it could run continuously. And while it did manage difficult calculations, it was as ponderous as it was large, making the calculation process unbearably slow.

Its importance to the history of computing lay not so much in its calculation ability as in demonstrating the potential of computers to the general public, as at its big unveiling in 1944, and in the fact that several key innovators got their start working on it.

Also, in the 1930s, two men on opposite sides of the Atlantic investigated the feasibility of using vacuum tubes in computers.

One was Thomas Flowers, in Great Britain, who in 1935 wanted to develop a way to improve the efficiency in telephone exchanges. He experimented with using thousands of vacuum tubes for this purpose.

In 1939, he managed to develop an experimental digital data processing system. He did not apply this process to calculation, yet he realized the possibility was there.

In the United States, John Atanasoff in 1937 conceived of an idea to use vacuum tubes to facilitate linear algebraic calculations.

Starting in 1941, he, along with his student assistant Clifford Berry, built a special purpose all-digital electronic machine powered by vacuum tubes that could solve linear algebraic equations.

It became known as the ABC or the Atanasoff-Berry Computer.

This system never worked reliably, but it did work, and components of it served as inspiration for John Mauchly, one of the creators of the ENIAC computer.

Yet, the man who truly created the concept of what would become a modern computer was Alan Turing.

In a 1936 paper, “On Computable Numbers,” written while he was at Cambridge University, he laid out the foundational concept of what modern computing could be.

Turing described the possibility of a digital machine with unlimited memory that could scan and analyze the data in its memory and then write results.

It would be able to store programmed instructions as well. It would not be limited to one type of problem but could work on many easily.

It was one of the most sweeping presentations of the possibility of creating a universal machine; a machine capable of processing any type of information or data.

It became known as the Universal Turing Machine or Universal Machine.

This visionary concept would lay the foundation for future technological development.
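Turing’s machine is simple enough to simulate in a few lines: a tape of symbols that can grow without limit, a head that reads and writes one cell at a time, and a table of rules saying what to write, which way to move, and which state to enter next. The sketch below runs one small, special-purpose machine that adds 1 to a binary number. A universal machine differs in that its tape would also hold another machine’s rule table, which it then follows. The rule format and names here are illustrative assumptions.

```python
def run_turing_machine(rules, tape, state="start", blank="_", max_steps=10_000):
    """Run a one-tape Turing machine until it reaches the 'halt' state.

    `rules` maps (state, symbol) -> (symbol_to_write, 'L' or 'R', next_state).
    """
    cells = dict(enumerate(tape))  # unbounded tape: unvisited cells read as blank
    head, steps = 0, 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)


# Binary increment: walk right to the end of the number, then add 1 moving left.
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),  # 1 + carry = 0, keep carrying
    ("carry", "0"): ("1", "L", "halt"),   # 0 + carry = 1, done
    ("carry", "_"): ("1", "L", "halt"),   # ran off the left end: new leading 1
}
```

`run_turing_machine(INCREMENT, "1011")` returns `"1100"`; changing only the rule table, not the simulator, would make the same “hardware” solve a different problem.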

Unaware of Turing’s ideas and other developments around the world, Konrad Zuse in Germany created the Z1 computer, a mechanical calculator that could be programmed using binary code punched on 35-millimeter film.

It didn’t work well.

So his next attempt, the Z2, used electromagnetic relay circuits, which improved its operation.

But it would be the Z3, unveiled in 1941, that would provide the world a key innovation.

With its programs punched on film that was stored outside the computer, the Z3 became one of the first functionally programmable computers to use an external rather than internal source for its programming.

This was an important innovation and proved very similar to Turing’s 1936 vision.

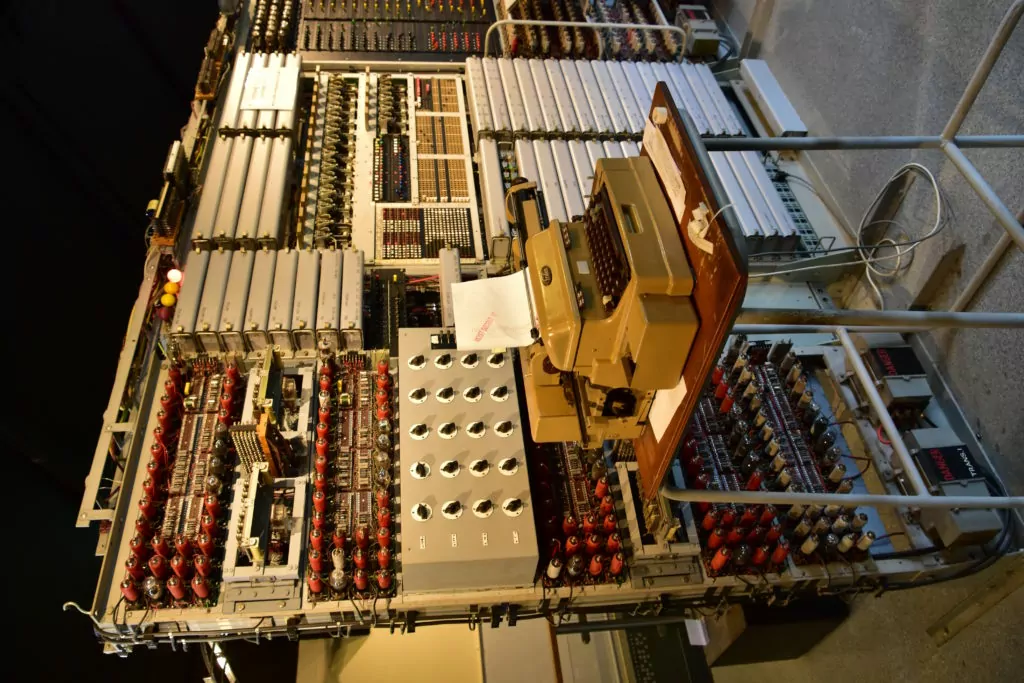

One of the first fully functioning electronic digital computers, Colossus, was built in Great Britain around 1943 as part of the effort to decipher high-level German teleprinter communications encrypted with the Lorenz cipher. It proved highly successful in this effort.

While not a general-purpose machine, it did prove the feasibility of Thomas Flowers’s idea of using vacuum tubes for computation.

To parallel the British achievement, the Americans built their own fully functioning computer.

Work on it began in 1943; it was originally built to calculate artillery shell trajectories.

While ENIAC (Electronic Numerical Integrator and Computer) was not used during the war, since it was unveiled in 1946, its development would have long-lasting implications for the advancement of modern computers and information technology.

Two men, John Mauchly and J. Presper Eckert, conceived and built ENIAC at the University of Pennsylvania. Their objective was to create a machine that could perform calculations intended to determine the trajectory of a fired shell and to do it faster than the cannon could fire.

Both Mauchly and Eckert explored options and made some critical determinations.

Basically ENIAC was designed with three main functions combined in one machine—computation, memory, and a “programmer” very much like Babbage’s “mill” where everything would be coordinated within the machine.

However, unlike the Mark I, they used vacuum tubes based upon what Mauchly saw in the ABC Computer.

Programming proved tedious because one had to literally shut the system down, reconfigure the wiring on the plugboard used for programming, and then start it back up. A definite handicap if one wanted to do a variety of calculations.

This situation harkens back to De Vaucanson’s challenge.

One scientist called ENIAC a bunch of Pascalines all wired together to help explain just how cumbersome the whole system was. It filled a 20-by-40 foot room in a “U”-shaped configuration. Yet it worked and served as a model for the next generation of computers.

After the war, Mauchly and Eckert started their own company to begin work on EDVAC (Electronic Discrete Variable Automatic Computer) in 1946; completing it in 1949.

It built upon ENIAC with innovations such as the ability to store computer programs on magnetic tape rather than having to reconfigure everything with each program change, as before.

It was designed to receive instructions electronically, keep programs and data in its memory, and used its memory to process mathematical operations.

These were major innovations.

However, British scientist Maurice Wilkes completed the EDSAC (Electronic Delay Storage Automatic Calculator) in 1949, two years before the EDVAC became fully operational, challenging the claim that the EDVAC was the first stored-program computer.

EDSAC was a large-scale electronic calculating machine using ultrasonic delay units for storing numbers and programs.

It processed data serially, working in the scale of two (binary). Punched tape was used for input and a teleprinter was used for output. These were important innovations.

In 1948, Frederick Williams, Geoff Tootill, and Tom Kilburn, who worked at the University of Manchester, created the Manchester Small-Scale Experimental Machine, nicknamed “Baby,” to test a theory about high-speed electronic computer storage using a cathode ray tube.

Frederick Williams’ idea was to create an electronic method to read the stored information, or “charge,” and then rewrite it continuously so that it could be retained indefinitely, a process called regeneration.

It worked!

It became the first computer to use what would become known as Random Access Memory (RAM).

In 1950, a computer called SEAC (Standards Eastern Automatic Computer) was built in Washington, DC. It became the first computer in the US to use a stored program.

It was built for the US National Bureau of Standards (now the National Institute of Standards and Technology) and was used to test and set scientific measurement standards in the US.

Foundations for future computer technology had been laid.

How do we take computers out of universities and government offices and put them in the hands of business and even ordinary folk?

Mauchly and Eckert moved on to a new challenge.

Starting in 1946, their goal was to create the first commercially available computer: the UNIVAC (Universal Automatic Computer).

Although Eckert and Mauchly designed the UNIVAC, financial difficulties forced them to sell their company to Remington Rand.

Remington Rand then completed the UNIVAC I in 1951 and delivered the first one to the U.S. Census Bureau.

The UNIVAC was a giant computer weighing 16,000 pounds. It used 5,000 vacuum tubes and could perform up to 1,000 calculations per second.

Data was fed into it on magnetic tape and processed by its vacuum tubes.

Tabulation results could be stored on magnetic tape or printed using a separate magnetic tape printer.

The room-sized design of these early computers made them costly to operate.

Vacuum tubes made matters worse: they consumed huge amounts of electricity and generated heat, which could lead to malfunctions, brownouts, or even fire.

These limitations prevented anyone but universities and government agencies from purchasing these machines. Yet the demand was there.

Commercial options would remain unavailable to most until scientific developments caught up with practical application.

In 1954, IBM attempted to fill this void with its first mass-produced computer, the IBM 650. The company sold 450 of them in the first year.

Yet size and operating expense remained a problem, largely because of the reliance on vacuum tubes.

Problem to be Solved: Now that we have created computer systems, how do we make them smaller, more user-friendly, and less expensive to run? Would it be possible to create a computer language that used words rather than binary code?

As we have seen, each development built upon the work of others, and this next phase of computer development would be no different.

And, as so often happens, these great problem solvers were actually working to improve something else.

In this case, Bell Laboratories wanted to move away from vacuum tubes and sought an alternative, hoping to improve the Bell telephone network.

They put three scientists on the case: Walter Brattain, William Shockley, and John Bardeen.

Their focus became semiconductors, materials that can act as either a conductor of electricity or an insulator.

Their goal was to control or amplify the flow of electrons more efficiently than a vacuum tube could.

In 1947, they created in their lab what would become known as the transistor.

Bell Labs unveiled the transistor in 1948, but it received little fanfare because it was intended for telephones, not computers.

And early transistors had some drawbacks.

The earliest units were incredibly expensive and did not yet work as fast as vacuum tubes. There were also construction issues that led to breakdowns.

However, they had real benefits. They switched on instantly, unlike vacuum tubes, which took time to warm up. They were small, and they used substantially less energy.

So they soon found a place beyond telephone networks, in other important communication applications such as transistor radios.

However, as research continued, scientists found the ultimate benefit of transistors—the fact that electrons moved faster when there was less material to move through.

And if one made semiconductors smaller and integrated smaller circuits, one could speed up computations.

The race was on to improve upon this invention and apply it to computers.

The information age had begun!

Key Innovation: Transistors!

Even though transistors did not play a leading role in computers throughout the 1950s, that does not mean people were not trying to integrate them into computers to get rid of those clunky, expensive vacuum tubes. In 1953, the first computer to use transistors was built and used at the University of Manchester.

The team built a second, improved version in 1955, but it still used some vacuum tube components. It used magnetic drum memory.

Also in 1955, the British Atomic Energy Research Establishment at Harwell built what would become known as the Harwell CADET. It did away with vacuum tubes entirely, working around the limitations of early transistors by running at a lower clock frequency.

It would be considered the first transistorized computer in Europe.

In 1954, engineers from Bell Laboratories built a transistorized computer for the US military called TRADIC (Transistorized Digital Computer).

What amazed people about this one was that it was only three cubic feet in size, tiny compared with previous computers.

In 1956, scientists at MIT would create the TX-0 (Transistorized Experimental Computer) which used a magnetic-core memory.

There would be other efforts along these lines, but what began the end of the vacuum tube in computers was IBM’s introduction of the 1401 Data Processing System in 1959, which used no vacuum tubes at all, only transistors.

IBM had designed it with business applications in mind.

By 1964, IBM had sold more than 10,000 of them.

It is important to note that many of the largely experimental transistor-powered machines used magnetic tapes and disks for secondary memory, and magnetic core for primary.

Magnetic-core memory stored data and instructions in a large array of tiny rings, or cores, of hard magnetic material, each of which could be magnetized in one of two directions.

One of its key components, the donut-shaped magnetic core, was developed by Jay Forrester at MIT through a US government-funded project.

Efforts to improve computer memory continued throughout the 1950s as technological needs evolved alongside new developments.

By the end of the 1950s most computers used magnetic-core memory arrays.

Parallel with the refining of the transistor for computer use came the development of computer programming languages for use by non-scientists.

In fact, during the 1950s the creation of programming languages became one of the new big frontiers in computer technology.

One of the earliest and most important innovations came in 1953, when John Backus, who worked for IBM, proposed to his superiors a more practical way to program IBM’s new 704 computer.

He and his team worked on this new language, which they initially called Formula Translation and later shortened to FORTRAN. It became commercially available in 1957 and remains in use today.

In 1954, Grace Brewster Murray Hopper, who was a Navy Lieutenant Commander at the time and one of the first programmers of the Harvard Mark I computer, was selected by Eckert-Mauchly Computer Corporation to head its programming department.

She had worked with them before on their UNIVAC project.

In 1959, she participated as an adviser in the Conference on Data Systems Languages (CODASYL).

This resulted in one of the first computer languages to use English-like words instead of numbers to instruct and program a computer. It came to be known as Common Business Oriented Language (COBOL) and was introduced to the public in 1961.

It would become one of the first user-friendly computer programming languages available for business use.

The race to improve upon the transistor culminated in 1958.

Two scientists, working separately, developed designs that merged not only transistors but also other computer components into one unit: the integrated circuit, also known as the microchip.

Jack Kilby, working for Texas Instruments, and Robert Noyce, working for Fairchild Semiconductor Corporation, arrived at similar solutions with slightly different designs.

Kilby worked with a sliver of germanium on which he built several components key to electronics and computer operations, including a transistor, resistors, and a capacitor, connected by fine gold wires to form a working oscillator.

Noyce’s design was based on the “planar process,” which embedded the components in a substrate of silicon overlaid with an evaporated metal film.

It is this metal film that created the electrical connections.

Although Kilby would win the patent, it would be Noyce’s design that became the standard.

The microchip became commercially available in 1961, and eventually all computers would use integrated circuits.

One of its first uses was in US Air Force computers and the Minuteman missile.

Key Innovation: Integrated Circuits!

Integrated circuits began to replace discrete transistors and ended the dominance of vacuum tubes for good. Eventually, thousands of miniaturized transistors could be packed onto a single silicon chip. Integrated circuits were more efficient and operated faster because they were extremely small, consumed less electrical power, and could be replaced easily.

Instead of using punch cards and printouts, users began to interact with computers through monitors and keyboards, based on the teletype model, that interfaced with an operating system. This allowed computers to run several applications at the same time using a central program that monitored memory. As computers became smaller and cheaper and their efficiency and speed greatly increased, they became more popular, and a huge surge of users emerged during the 1960s.

With so much technological development happening through the 1940s and 50s, visionaries began to shift their ideas to even broader applications for computers than just basic computation.

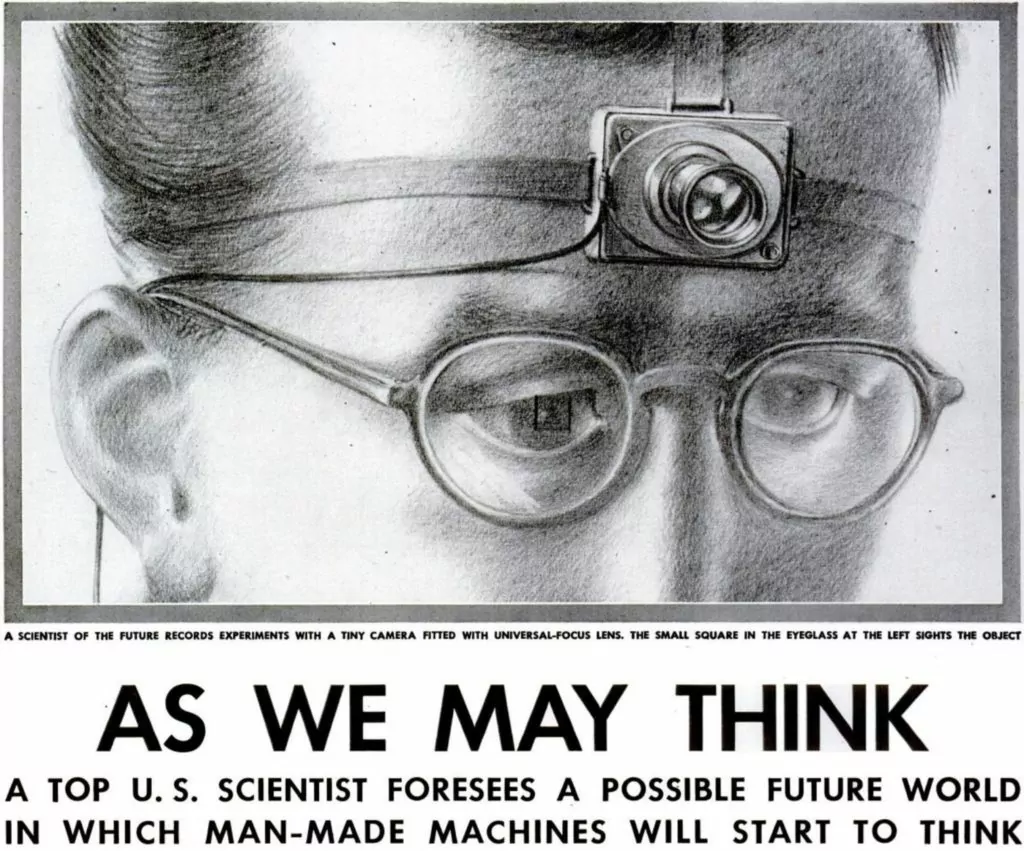

As early as July 1945, Vannevar Bush wrote a groundbreaking article in The Atlantic Monthly titled “As We May Think.”

In this article, he discussed how information processing units could be used in peacetime.

He anticipated a glut of information that would overwhelm current systems unless we found a way to manage it.

He introduced the idea of a system called Memex that could not only manage information, but also allow humans to exploit it, including the ability to jump from idea to idea without much effort.

In a way, he anticipated the World Wide Web.

Memex was never built.

Yet, like many ideas ahead of their time, it lingered until its moment came.

In 1948, one man would set the stage to change how we think about the transmission of all information, from mathematics and language to music and film, in terms of digital media.

In his article “A Mathematical Theory of Communication,” published in the Bell System Technical Journal, Claude Shannon introduced four major concepts that laid the groundwork for what would become modern information technology and artificial intelligence.

- First, he offered a solution to the problem of noisy communication channels, in what would become known as the “noisy-channel coding theorem.” He showed that by encoding information and including redundancy in it, data could be sent with an arbitrarily small probability of error, as long as the transmission rate stayed below a certain limit. That limit is the channel capacity, also known as the Shannon limit: the maximum rate at which data can be sent reliably through a channel, which depends on the channel’s bandwidth and noise level.

- He introduced a general architecture for communication systems by demonstrating that any such system could be broken down into components: an information source, a transmitter, a channel, a receiver, and a destination.

- Shannon realized that for transmission purposes it didn’t matter what the content was. It could be in any format. It just had to be encoded into “bits,” the first time the word appeared in print (Shannon credited it to his colleague John Tukey). He defined the bit as a unit for measuring information.