Here are the differences between a 1366×768 pixels resolution and a 1920×1080 pixels resolution and which is better:

1920×1080 is usually better because it’s a higher resolution and provides better image clarity.

There are reasons to choose a lower resolution, such as to save computational power.

Many displays can adjust the screen resolution below their maximum, so having a higher maximum resolution gives you more control.

So if you want to learn all about whether 1366×768 pixels or 1920×1080 pixels is the better resolution for you, then this article is for you.

It’s time for the first step!

What Do the Resolution Numbers 1366×768 and 1920×1080 Mean?

The numbers in a screen resolution refer to the number of pixels used to produce an image within that resolution. More specifically, the resolution gives the number of columns and rows (in that order) of pixels that will be on a screen, so 1920×1080 means 1,920 pixels across and 1,080 pixels down.

In a raw sense, 1920×1080 resolution has roughly double the number of pixels when compared to 1366×768: about 2.07 million versus about 1.05 million.
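To make the "roughly double" figure concrete, here is a minimal Python sketch of the arithmetic. The function name `pixel_count` is just an illustrative helper, not part of any library.

```python
def pixel_count(width: int, height: int) -> int:
    """Total pixels on a screen that is `width` pixels across and `height` pixels down."""
    return width * height

hd = pixel_count(1366, 768)
full_hd = pixel_count(1920, 1080)
ratio = full_hd / hd

print(f"1366x768:  {hd:,} pixels")       # 1,049,088
print(f"1920x1080: {full_hd:,} pixels")  # 2,073,600
print(f"ratio:     {ratio:.2f}x")        # 1.98x
```

So the higher resolution has a little under twice as many pixels, not exactly twice.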

What does it mean to have more pixels? This yields two results. One relates to image clarity, and the other relates to dots per inch (or DPI). Let’s talk about image clarity first.

Increasing the number of pixels in the image increases the sharpness and clarity of the image. The easiest way to understand this is in image enlargement.

If you zoom in on an image enough, it eventually becomes blurry. You can try it on your phone right now. Take a picture and see how far you can zoom in before the image quality suffers.

Any image will fall victim to this inevitability, and it has to do with pixels. More pixels create more data for the image, and that allows you to zoom in closer before you get blurriness or distortion.

There is a famous picture you can access online that used 120 Gigapixels in its formation. It allows unprecedented amounts of zooming, and you can play with it here.

What does all of this mean for a screen resolution? Larger screens benefit from higher resolutions. If you try to watch a low-definition video on a 50-inch TV, you’re going to see the problem. So, any chance to increase screen resolution helps when images are enlarged.

But, if the screen isn’t very large, the image improvements you get from higher resolutions can be hard to notice with the naked eye. What’s more is that high resolution impacts more than just image clarity, and it’s important to take everything into consideration.

One of the other aspects impacted by screen resolution is DPI. This refers to the number of dots that can fit in an inch of the screen in any direction.
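As a rough illustration, the dots per inch of a screen (often called PPI, pixels per inch, for displays) can be estimated from the resolution and the diagonal size. The sketch below assumes square pixels and a 15.6-inch diagonal, which is a size picked only as an example; `pixels_per_inch` is a hypothetical helper name.

```python
import math

def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density along the diagonal, assuming square pixels."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in

DIAGONAL_IN = 15.6  # assumed screen size, for illustration only

for w, h in ((1366, 768), (1920, 1080)):
    print(f"{w}x{h} at {DIAGONAL_IN} in: {pixels_per_inch(w, h, DIAGONAL_IN):.0f} PPI")
```

On this assumed screen size, the two resolutions work out to about 100 and about 141 dots per inch respectively.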

When watching a video or looking at images, DPI and screen resolution really mean the same thing. When you use a computer, you probably control things with a mouse. This is where DPI takes on its own meaning that is worth learning.

The mouse has to travel across every single dot in each inch in order to move across your screen. If you have a higher DPI, it takes more motion from the mouse controller to move the cursor on the screen.

Put more simply, with a traditional mouse, you have to move your arm farther to get the cursor on the screen to respond.

So, is a higher or lower DPI better for your mouse? That really depends on the application. If you need very precise mouse movements (such as for gaming or picture editing), then a high DPI is usually better.

If you’re trying to move the mouse faster, a lower DPI can be beneficial. It’s a matter of preference.

But, there’s a bottom line. Having more pixels to cover increases the time it takes to move your mouse across the screen. At the same time, that improves mouse accuracy. There are mouse settings that can also be adjusted to compensate for this, so you can customize your experience.

In the mouse settings, you will typically see a DPI setting. This is technically referring to something different from the number of dots on your screen, and it has an inverse effect.

In these settings, a higher DPI makes the mouse move faster, which should not be confused with the DPI that is determined by the screen resolution.
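The relationship between the two kinds of DPI can be sketched with a little arithmetic. This is a simplified model that assumes one mouse count moves the cursor exactly one pixel (no pointer acceleration or speed scaling), and the 800 DPI value is a hypothetical mouse setting chosen for illustration.

```python
def mouse_travel_inches(screen_width_px: int, mouse_dpi: int) -> float:
    """Inches of hand movement needed to cross the screen, assuming 1 count = 1 pixel."""
    return screen_width_px / mouse_dpi

MOUSE_DPI = 800  # hypothetical mouse setting, for illustration only

for width in (1366, 1920):
    inches = mouse_travel_inches(width, MOUSE_DPI)
    print(f"{width} px wide: {inches:.2f} in of mouse travel at {MOUSE_DPI} DPI")
```

Under these assumptions, crossing a 1366-pixel-wide screen takes about 1.7 inches of movement and a 1920-pixel-wide screen takes 2.4 inches. Doubling the mouse DPI setting would halve both distances.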

Which Resolution Is Better When Comparing 1920×1080 and 1600×900?

How do 1920×1080 pixels and 1600×900 pixels compare as resolutions for your laptop monitor or screen?

Among the two resolutions, 1920×1080 has more pixels, so it is the better resolution.

In general, more pixels per square inch improves the clarity of an image. This is especially evident when you zoom in on an image or scale it up.

Learn all about the differences between 1920×1080 pixels and 1600×900 pixels and which is the better resolution for you here.

What Are Aspect Ratios and Do They Matter?

The numbers in a screen resolution determine the aspect ratio. This is a term that describes the shape of the screen or the image. If you remember old CRT TVs, they were close to square.

Their aspect ratio was 4:3, meaning the screen was only slightly wider than it was tall. Meanwhile, most filming is done in a wider rectangular aspect ratio, so those TVs had to cut part of the image or black out part of the screen to make everything fit.

1920×1080 is exactly a 16:9 aspect ratio, and 1366×768 is very close to it. Both are rectangular, and the horizontal is longer than the vertical by a ratio of about 1.78:1. The differences in the aspect ratio between these two are subtle, and in most cases, you won’t notice any meaningful difference.
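An aspect ratio is the resolution reduced to its lowest terms. The following Python sketch does this with the greatest common divisor; `aspect_ratio` is an illustrative helper name.

```python
import math

def aspect_ratio(width: int, height: int) -> tuple[int, int]:
    """Reduce width:height to lowest terms using the greatest common divisor."""
    divisor = math.gcd(width, height)
    return width // divisor, height // divisor

for w, h in ((1920, 1080), (1366, 768)):
    rw, rh = aspect_ratio(w, h)
    print(f"{w}x{h} -> {rw}:{rh} (about {w / h:.4f}:1)")
```

1920×1080 reduces to exactly 16:9, while 1366×768 reduces to 683:384, which is about 1.7786:1 compared to 1.7778:1 for true 16:9. That small gap is why the shape difference between the two is so hard to see.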

The exception occurs when you use multiple screens. If you mix and match screen resolutions for a multiple display setup, having some at 1920×1080 and others at 1366×768 will cause subtle but noticeable differences. The screens will have to make adjustments, and you will end up with small shape distortions in the images.

For many applications, these differences are small enough to not matter, but if you need high-precision image clarity or are someone who notices and is bothered by these differences, it can be a problem.

1366×768 vs. 1920×1080: How Do Resolutions Impact Performance?

We’ve talked about how screen resolutions control the visuals that you see, but they also play a part in what happens behind the scenes. The device using the screen has to send a signal to every single pixel in order to properly form an image. When you’re playing video or otherwise changing images in real-time, that can constitute many signals for a high-resolution screen.

In other words, it takes more processing power to control a screen with more pixels. Since a high resolution can be more demanding, you might max out the video processing resources when you push the envelope of what a computer (or device) can do.

In this case, a higher resolution can be a liability. You might get cleaner images, but you can sacrifice your frame rate as a cost.

What is frame rate?

This is the speed at which your screen is showing you new images. To put it in perspective, Hollywood movies are usually filmed and shown at 24 frames per second (FPS).

At this frame rate, you can watch a movie in a theater, and it will look normal. If you watch that same movie on your computer and the frame rate drops well below that, to, say, 10 FPS, the video will look very choppy.

Now, frame rate usually isn’t an issue when watching a movie. That example just helps with visualization. In reality, the two functions on a computer that see frame rate problems tend to be gaming and video editing. These push the limits of video processing pretty hard, and they can exceed those limits, which results in a dropped frame rate.

When frames are dropping, one of the best solutions is to use a lower screen resolution. Since 1366×768 has basically half the number of pixels, it dramatically lowers the amount of computation the computer has to do to keep up with video demand. This is the case where a lower resolution might be preferable.
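A back-of-the-envelope way to see the difference is to count how many pixels have to be produced each second. The sketch below assumes a target of 60 FPS, which is only an example value, and treats pixels per second as a rough proxy for workload; real rendering cost does not scale perfectly with pixel count.

```python
def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Pixels that must be drawn each second at a given frame rate."""
    return width * height * fps

TARGET_FPS = 60  # assumed frame rate, for illustration only

for w, h in ((1366, 768), (1920, 1080)):
    print(f"{w}x{h} at {TARGET_FPS} FPS: {pixels_per_second(w, h, TARGET_FPS):,} pixels/s")
```

At this assumed frame rate, 1920×1080 needs about 124 million pixels per second, while 1366×768 needs about 63 million.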

1366×768 vs. 1920×1080: Which Is Better for Laptops?

Not all laptops are the same, so you might be able to find specific exceptions to the following generalizations. That said, most modern laptops are built with a native resolution of 1920×1080.

That means they default to this resolution and are designed around it. As you can imagine, it suggests that this is the best resolution for laptops, but we can cut a little deeper into the topic.

Typically, higher resolutions are better for image quality on large screens. A 17-inch laptop is considered to have a large screen by industry standards.

Compared to stand-alone monitors and TVs, that’s not very big. What that means is that you are unlikely to see a major difference in image quality regardless of which of these two resolutions you pick.

Similarly, most of the programs you would run on your laptop won’t tax it enough for the resolution to impact performance, so you have a lot of freedom here.

The one situation where the resolution really matters is when you attach an external monitor to your laptop. In that case, the higher resolution will be more noticeable with a larger screen, and most people prefer 1920×1080.

Here’s the bottom line. Laptops usually default to 1920×1080 and run better on it.

You can drop to 1366×768 if you want, but in either case, you are unlikely to notice any real difference between the resolutions unless you plug a larger monitor into the laptop. In that case, you can treat it just like any other PC, and the higher resolution is usually better.

1366×768 vs. 1920×1080: Which Is Better for Computer Screens?

Computers have a default screen resolution, and it is usually 1920×1080. Everything on the computer is designed for that default, so in the vast majority of cases, it’s the better option. There are two notable exceptions. The first we already covered: if you need to save computational power, take the lower resolution.

The second exception is when 1920×1080 is not the default for a computer. Older computers default to lower resolutions, and some newer computers are starting to default to resolutions higher than 1920×1080.

In either case, the native resolution (or default resolution) will display everything at the size and shape intended by the developers. This can improve your experience.

But, if you want more clarity or more DPI, then 1920×1080 is always going to be better than 1366×768.

1366×768 vs. 1920×1080: Which Is Better for TVs?

TVs are a different story from computers. On a TV, high resolution is basically always better. You get a sharper image and the option to play higher definition videos.

Since the TV doesn’t have to do much processing, you’re not worried about frame rates. Frame rates will be determined by the player or streaming service, and these can generally handle the processing needed to drive your TV at 1920×1080 resolution.

There is an exception, and that is when your TV is being used as a computer monitor. In that case, refer to the recommendations in the previous section.

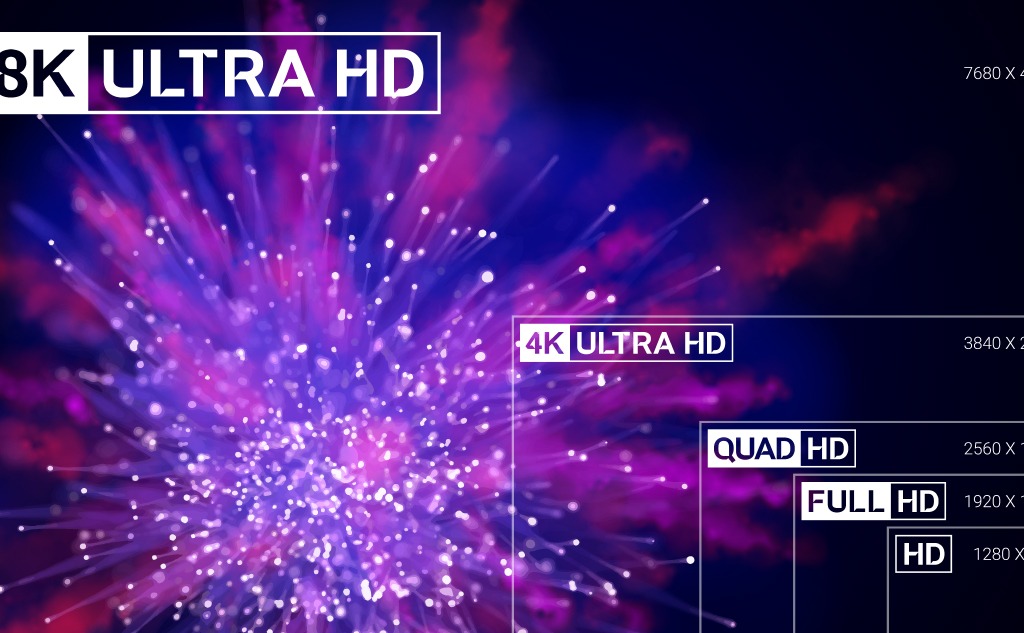

Let’s really drive this point home. Most new TVs operate in the 4K range. To put that in perspective, 4K video operates with a resolution of 3840×2160. That’s four times the pixel count of 1920×1080. (The name 4K comes from the horizontal resolution of roughly 4,000 pixels.)
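The "four times" figure follows from doubling both dimensions, as this short Python check shows. The variable names are illustrative only.

```python
full_hd_pixels = 1920 * 1080   # 2,073,600
uhd_4k_pixels = 3840 * 2160    # 8,294,400

# Doubling both the width and the height quadruples the pixel count.
print(f"1920x1080: {full_hd_pixels:,} pixels")
print(f"3840x2160: {uhd_4k_pixels:,} pixels")
print(f"ratio:     {uhd_4k_pixels / full_hd_pixels:.0f}x")
```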

Even with this massive increase in pixel count, 4K TVs are not known for dropping frames. Playback is fine, so you can prioritize higher pixel counts and resolutions for a TV.